A Test of a Blended Method for Teaching Medical Coding

Meredith Whiteside, OD, FAAO, Shaokui Ge, PhD, Dennis Fong, OD, FAAO, Robert DiMartino, OD, MS, FAAO

Abstract

Background: Evaluation and Management (E/M) codes are used by all healthcare providers to bill third-party payers for their services. The purpose of this study is to determine whether providing education about E/M coding to third-year optometry students in a blended teaching format leads to improved coding accuracy.

Methods: Optometry student participants (N = 53) had the traditional classroom and clinical education in E/M coding throughout the first 2 years of the curriculum. Students were randomly divided into two groups. One group (the Experimental Group) received education regarding E/M coding in an online format as well as two interactive practice cases. The other group did not. All students were then asked to determine the E/M code on 5 standardized cases. Approximately 8 weeks later, a second set of 4 standardized cases was given to all participants to determine whether knowledge acquired was long-lasting.

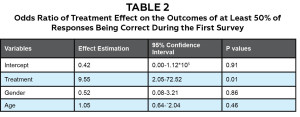

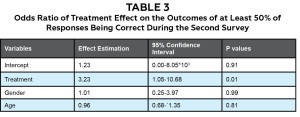

Results: Generalized linear mixed models showed that students in the Experimental Group were much more likely to indicate the correct E/M code than students in the Control Group, with an odds ratio of 9.55 (95% confidence interval 2.05-72.52; P = 0.01). After 7-9 weeks, the treatment effect remained significant but was reduced, with an odds ratio of 3.23 (95% confidence interval 1.05-10.68; P = 0.01).

Conclusions: A blended format of teaching E/M coding is at least initially effective in improving coding ability. However, without further intervention, the effect decreases over time. Because of this, additional reinforcement of coding is needed, likely in both the didactic curriculum and the clinic.

Key Words: CPT codes, evaluation and management codes, blended teaching

Introduction/Background

According to the American Optometric Association, in 2013 more than half of the gross income in private optometric practices was derived from third-party insurance plans.1 This revenue could be further subdivided into almost an even split between payments from public plans, such as Medicare and Medicaid, and those from private insurance programs, including managed care and preferred provider networks. As the patient population ages, and with the full implementation of the Affordable Care Act (i.e., “ObamaCare”), the percentage of optometric revenue attributed to third-party plans seems certain to rise. Thus, an important aspect of preparing optometry students to transition successfully from the teaching clinic to private practice is ensuring they are trained to code and bill third-party payers efficiently and accurately.

While we are unaware of any published reports for optometry, studies from other health professions show that documentation and appropriate coding and billing are important skills in clinical practice.2-5 The Accreditation Council for Graduate Medical Education now lists awareness of and responsiveness to the larger system of health care as 1 of 6 general competencies required for resident development.6 In other words, medical residents, like other healthcare providers, must be knowledgeable about coding and reimbursement.2,7,8,10

Current Procedural Terminology (CPT) is an elaborate coding system that includes the Evaluation and Management (E/M) codes used by all healthcare providers to bill third-party payers for their services. Determining the correct E/M code to be applied to a specific clinical case from the array of CPT codes requires the clinician to understand complex Medicare rules and to know what must be recorded in a patient’s records to justify billing under a particular code. Despite the importance of learning accurate documentation and coding, there currently are no accepted evidence-based standards for teaching these skills in either optometric or medical education programs. Although there is no literature indicating how optometry students are taught coding, informal discussions with optometric educator colleagues indicate that, as in medical residency programs, coding and billing education typically involves a limited number of formal lectures (<4 hours) followed by the informal provision of additional information during patient care encounters.

While it would seem logical to teach E/M coding as part of everyday clinical teaching, previous studies suggest that this can be difficult. Teaching coding requires that time be allocated for this task. This inevitably erodes the time allotted for patient care, which has the potential to reduce the number of patient encounters. This is supported by previous studies showing that teaching E/M coding during patient care has a negative impact on student evaluations of clinical teaching and on the delivery of patient care.9,12-14 Finally, while it might be suggested that acquiring the skill of coding and billing accurately can be put off until patient care has been mastered, evidence shows substantial error rates in billing are found even in highly experienced clinicians, including clinical faculty members and community doctors.10,11,15,16

As the cost for health care rises, third-party payers are experiencing increasing political and financial scrutiny to control their costs by insisting that caregivers bill accurately and have the appropriate supporting documentation. When billing errors — either over- or under-billing — are discovered, they can have significant financial repercussions. Each type of mistake is considered Medicare fraud and can result in costly audits and legal consequences.17 Coding’s importance to financial success in private practice, the heightened concerns of third-party payers about eyecare costs and coding errors, and the financial losses that can result from these errors, all provide a strong rationale for strengthening the teaching of coding in professional optometry programs. However, given the curricular time demands of professional training, it is important to identify the most effective methods for teaching coding.

The hypothesis is that utilization of a blended format of teaching (where at least part of the delivery of content and instruction is via computer-mediated activities in addition to the traditional teaching) will significantly improve the accuracy with which optometry students code patient cases. The purpose of this study was to determine whether providing education about coding to third-year optometry student interns in a blended teaching format leads to improved coding accuracy for a set of standardized cases when compared to the traditional method. (The traditional method has typically involved roughly 2-3 hours of formal lecture combined with informal discussion at the conclusion of patient care.) Longevity of acquired knowledge was assessed approximately 8 weeks after the initial blended instruction, by asking subjects in this project to code a second set of standardized cases.

Methods

This study was conducted with approval from the Institutional Review Board Committee of the University of California – Berkeley (protocol 2013-07-5502). The design of the study began with the creation of 10 “standardized” patient cases that were subsequently used to assess the ability of students to assign E/M procedure codes properly. After the authors drafted these cases, they were reviewed by clinical faculty members to assess their completeness and their authenticity in representing actual patients. The draft cases were revised after this initial review and then pilot-tested on a second group of faculty members. After a final set of revisions, all 10 cases were sent to 3 expert external reviewers, who were asked to determine their E/M procedure codes independently. These reviewers, who donated their time and effort to the study, were nationally recognized optometric CPT coders, each of whom has more than 15 years of experience in medical coding. All have lectured and published extensively on medical coding for optometrists. In evaluating these 10 cases, the experts disagreed on the correct E/M procedure codes that should be assigned to 4 of them. In 3 of these, 2 out of the 3 experts provided the same code, which was subsequently used as the correct code for the purposes of the study. The fourth case (with a diagnosis of trichiasis) resulted in a three-way split among the experts and, as a result, it was eliminated from the study.
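The majority rule described above (use the code chosen by at least 2 of the 3 experts, and drop a case when all 3 disagree) can be sketched in a few lines of Python. This is only an illustration: the function name `consensus_code`, the case labels and the expert responses below are hypothetical and are not the study's data.

```python
from collections import Counter
from typing import Dict, Optional, Sequence


def consensus_code(expert_codes: Sequence[str]) -> Optional[str]:
    """Return the code chosen by a strict majority of experts, or None if no majority exists."""
    code, votes = Counter(expert_codes).most_common(1)[0]
    return code if votes > len(expert_codes) / 2 else None


# Hypothetical expert responses, for illustration only (not the study's data).
example_cases: Dict[str, Sequence[str]] = {
    "case_a": ["99213", "99213", "99213"],  # unanimous agreement
    "case_b": ["99212", "99213", "99213"],  # 2 of 3 experts agree
    "case_c": ["99212", "99213", "99214"],  # three-way split
}

# Build the answer key, then keep only cases that have a majority code.
answer_key = {name: consensus_code(codes) for name, codes in example_cases.items()}
retained = {name: code for name, code in answer_key.items() if code is not None}
```

Under this rule a three-way split yields no answer-key entry, which mirrors the decision to eliminate the trichiasis case.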

The study utilized third-year students at the University of California – Berkeley School of Optometry who had been exposed to the traditional curriculum. They had received previous instruction on coding delivered in the traditional format: approximately 3 hours of classroom lecture in a second-year pre-clinic course, and informal case-based instruction during a minority of the approximately 50 primary care patient encounters they experienced as clinical interns during the summer semester following the second year and the initial part of the fall semester of the third year. In addition, at the beginning of the fall semester of the third year, all students received a one-page E/M coding flow-sheet, which was designed to help them determine the level of E/M code based on the standard Medicare rules. The sheet explained how to assign a code by first determining the level of the 3 major coding components (history, physical examination and medical decision-making), and then provided a guide to choosing the level of procedure code that should be used to bill a particular case.

To begin the study, all students completed a survey that utilized a Likert scale to indicate:

1. whether the students understood what an E/M code is

2. the level of confidence they had in determining the E/M code correctly

3. whether they believed knowledge of coding was important to their careers

4. the total extent of their prior education on E/M coding (within and outside of the optometry curriculum)

5. whether they believed their previous education was adequate to prepare them for a future job

6. the mode of practice to which they aspired after graduation.

After all students had answered the questions, they were randomly assigned to either the Control (N = 30) or Experimental Group (N = 29). Those in the Experimental Group were introduced to evaluation and management (medical) coding via a 1-hour online program that incorporated streaming video and a concurrent PowerPoint presentation. During this program, students were introduced to the different components of the E/M coding flow-sheet and then presented with 2 different teaching cases (diabetic retinopathy and dry eye). In both cases, the flow-sheet was used to guide students through the thought process involved in determining the E/M code.

To gain more hands-on experience in E/M coding, the Experimental Group was then assigned to participate in a 15-minute, interactive, computer training program, where they were presented with sample written cases. One of these was a follow-up evaluation of a dry eye case that had been introduced earlier, during the 1-hour online program. The second was the evaluation and management of a patient with glaucoma. In this interactive exercise, the students were presented with a patient history, findings and assessment, and plan for each case. The students were then tasked with determining the E/M code.

Because the final procedure code is based on the level of the patient history, physical examination and medical decision-making, students completed multiple-choice questions for each of these 3 components, selecting the answer they believed gave the appropriate coding level for each component. Upon choosing a level for a component, students were given immediate feedback. For example, if a question regarding a component level was answered incorrectly, they were presented with a message that indicated why the answer chosen was incorrect and referred to the E/M flow-sheet for additional guidance. Students were allowed to re-answer the question until the correct answer was selected. Once the question was answered correctly, they were able to proceed to the next component. The final multiple-choice question required students to select the correct overall E/M procedure code for the case. Time spent viewing the instructional video and performance on the multiple-choice questions during the interactive program were tracked to ensure students completed this component of the study before progressing to the next.

After the Experimental Group watched the video and practiced the interactive cases, the students in both the Experimental and Control groups were asked to assign a procedure code to each of the first 5 standardized cases. These cases were chosen to simulate typical patients encountered in an optometric setting that utilizes medical coding: glaucoma suspect, allergic conjunctivitis, cataracts, new onset of floaters (posterior vitreous detachment) and blepharitis. Students in both groups were allowed to use any resources they wished (other than consulting each other or faculty), including books, guides, the internet or the flow-sheet given to them at the beginning of the study, to help them determine the correct coding. This initial exercise was designed to establish whether exposure to the interactive training regimen described above resulted in members of the Experimental Group coding cases more accurately than members of the Control Group.

The second phase of the study was designed to assess the extent to which the knowledge the Experimental Group gained from the interactive online training was long-lasting. For this phase, 7-9 weeks after coding the first set of 5 standardized cases, students in both the Experimental and Control groups reviewed and provided E/M procedure codes for the remaining 4 standardized cases. These cases included follow-up for glaucoma, flap retinal tear, diabetic eye evaluation and dry age-related macular degeneration. All 4 cases were labeled as new or established, and the 5 possible CPT codes were provided as choices for selection. For example, codes 99201-99205 would be the possible choices in the case of new patients. Once again, students were allowed to consult the resources listed above to assist them in coding.

The difference in determining the correct E/M code between the Control and Experimental groups was tested by a Fisher exact test. A generalized linear mixed model was then employed to quantitatively assess whether the blended learning treatment had a significant effect on students in the Experimental Group. The analysis utilized an “odds ratio” to allow comparison of the performance of students in the Experimental Group to those in the Control Group.18 An odds ratio is a measure of association between a treatment (in this case exposure to blended learning) and an outcome (accuracy of E/M coding). The odds ratio represents the odds that an outcome will occur given a particular treatment (Experimental Group) compared to the odds of the outcome occurring in the absence of that treatment (Control Group).
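Written out, the definition above takes the following form. The symbols are notation introduced here for illustration and do not appear in the original analysis: $p_E$ and $p_C$ are the probabilities of correct coding in the Experimental and Control groups, and $a$, $b$, $c$, $d$ are the cell counts of a 2 × 2 table (Experimental correct, Experimental incorrect, Control correct, Control incorrect).

$$
\mathrm{OR} \;=\; \frac{p_E / (1 - p_E)}{p_C / (1 - p_C)} \;\approx\; \frac{a / b}{c / d} \;=\; \frac{a\,d}{b\,c}
$$

An odds ratio of 1 indicates no association between treatment and outcome, and values above 1 indicate higher odds of correct coding in the Experimental Group. The count-based form on the right is the unadjusted sample estimate, so it need not equal the estimate produced by a fitted model.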

Results

Sixty-two third-year optometry students enrolled in a fall semester course on advanced clinical procedures were invited to participate in the study. Of those 62 students, 59 volunteered and 3 declined. During the testing, 6 students did not indicate an answer for either all 5 cases in the first survey or all 4 cases in the second survey. Because of this, their results were removed from the data analysis, which left 53 participants.

Because some students may have had additional experience either in clinic or from prior work experience that would put them at an advantage for E/M coding, a survey checking knowledge and attitudes regarding E/M coding was done to characterize students’ background. The survey questioned students on the number of hours of education received in coding, knowledge, confidence and whether they felt E/M coding was important to their careers.

Chi-square or Fisher exact tests showed that at the onset of the study (before the Experimental Group received extra training), there was no difference between the Control and Experimental groups in terms of understanding what an E/M code is, confidence in coding, feeling that coding would be important in their future career, or the amount of education received prior to the study (P values = 0.29-0.41; Table 1). Seven to nine weeks after the first E/M cases were completed, and immediately before the second set of E/M cases was given, the initial knowledge and attitudes survey was given again. By this point, the Experimental Group had been shown the online lecture material, and there were significant differences between the two groups in terms of understanding what an E/M code is (P value = 0.04), having received training (P value = 0.004) and feeling that the education received was adequate (P value = 0.03). In terms of the other factors (confidence in coding and recognizing the importance of coding), there were no differences between the Experimental and Control groups.

One to two weeks after the Experimental Group viewed the video, all participants reviewed and then indicated the E/M procedure code for the first set of 5 standardized cases. When the results for all 5 cases were tabulated, it was found that 92% (24 of 26 participants) of the Experimental Group coded the cases correctly > 50% of the time. In comparison, in the Control Group 58% (14 of 24 participants) coded the same cases correctly > 50% of the time. According to the generalized linear mixed model of these data, the Experimental Group (who watched the instructive video and completed the interactive program) had an odds ratio of 9.55 (95% confidence interval 2.05-72.52), indicating the training program had a positive effect on coding accuracy (Table 2).
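As a rough cross-check on the direction and size of this effect, a crude (unadjusted) odds ratio can be computed directly from the counts reported above. The sketch below is not the generalized linear mixed model used in the study, so it is not expected to reproduce 9.55 or its interval. The function names are illustrative, and the interval uses the standard Woolf (log odds ratio) approximation, which is an assumption made here rather than the authors' method.

```python
import math


def crude_odds_ratio(exp_hits: int, exp_total: int, ctl_hits: int, ctl_total: int) -> float:
    """Unadjusted odds ratio: odds of success in the experimental group over the control group."""
    exp_odds = exp_hits / (exp_total - exp_hits)
    ctl_odds = ctl_hits / (ctl_total - ctl_hits)
    return exp_odds / ctl_odds


def woolf_interval(exp_hits: int, exp_total: int, ctl_hits: int, ctl_total: int, z: float = 1.96):
    """Approximate 95% interval from the standard error of the log odds ratio (Woolf method)."""
    a, b = exp_hits, exp_total - exp_hits
    c, d = ctl_hits, ctl_total - ctl_hits
    log_or = math.log((a * d) / (b * c))
    se = math.sqrt(1 / a + 1 / b + 1 / c + 1 / d)
    return math.exp(log_or - z * se), math.exp(log_or + z * se)


# Counts reported for the first set of cases: participants coding correctly > 50% of the time.
phase1_or = crude_odds_ratio(24, 26, 14, 24)  # (24/2) / (14/10), about 8.57
phase1_low, phase1_high = woolf_interval(24, 26, 14, 24)
print(f"crude OR = {phase1_or:.2f}, approx. 95% CI {phase1_low:.2f}-{phase1_high:.2f}")
```

The crude value of about 8.57 is of the same order as the model-based estimate, and the wide approximate interval reflects the fact that only 2 participants in the Experimental Group fell below the 50% threshold.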

Seven to nine weeks after both groups evaluated the initial set of cases, a second set of E/M coding cases was administered to both groups. The second survey found that 59% (16 of 27 participants) of the Experimental Group answered the cases correctly > 50% of the time. In comparison, 31% (8 of 26 participants) of the Control Group answered the same cases correctly > 50% of the time. At this point in the study, the generalized linear mixed model of the data showed the Experimental Group (who watched the instructive video and completed the interactive program) had an odds ratio of 3.23 (95% confidence interval 1.05-10.68; Table 3). This indicates that, while the training program initially had a positive effect on coding accuracy, after 7-9 weeks this effect was greatly reduced. For both the initial and second sets of coding cases, neither gender nor age had a significant effect on students' responses.
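The Fisher exact test named in the Methods can be illustrated on the dichotomized counts reported above. This is a minimal standard-library sketch. It assumes the test is applied to the 2 × 2 table of participants above versus at or below the 50% threshold, which may not be the exact comparison the authors ran, and the function name is hypothetical.

```python
from math import comb


def fisher_exact_two_sided(a: int, b: int, c: int, d: int) -> float:
    """Two-sided Fisher exact p-value for the 2x2 table [[a, b], [c, d]].

    Sums the hypergeometric probabilities of every table with the same margins
    that is no more probable than the observed table.
    """
    row1, row2, col1 = a + b, c + d, a + c
    n = row1 + row2

    def prob(x: int) -> float:
        # Probability that the top-left cell equals x, given fixed margins.
        return comb(row1, x) * comb(row2, col1 - x) / comb(n, col1)

    lowest, highest = max(0, col1 - row2), min(row1, col1)
    p_observed = prob(a)
    tolerance = 1 + 1e-9  # guards against floating-point ties
    return sum(prob(x) for x in range(lowest, highest + 1) if prob(x) <= p_observed * tolerance)


# Second set of cases: 16 of 27 (Experimental) vs 8 of 26 (Control) above the threshold.
p_phase2 = fisher_exact_two_sided(16, 11, 8, 18)
print(f"two-sided Fisher exact p = {p_phase2:.3f}")
```

Because this test ignores the repeated responses within each student, its p-value can differ from the one obtained from the mixed model.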

Discussion

Traditionally, optometric education has focused on teaching clinical care of patients, with very little time being allocated to educating students about the role of proper documentation and determining the correct E/M procedure code. As optometry students enter the workforce, one of the responsibilities they will face is E/M coding. Good E/M coding ability can be critical to success, and poor E/M coding can result in serious legal and financial consequences. The purpose of the study was to evaluate a new technique for teaching E/M coding and then determine whether this technique led to improved accuracy.

Determination of the correct code for standardized cases

The E/M coding system is complex, and coding can be challenging for novice and experienced clinicians alike. In this study we attempted to ensure the correct coding for each standardized case by utilizing 3 optometric coding experts to determine the code. The experts agreed on the E/M code for 6 of the 10 cases. Interestingly, in 4 of the 10 cases there was disagreement, although the chosen E/M codes were typically very close (e.g., one expert chose 99212 while another chose 99213). It seems likely that disagreement over E/M codes arose because there was a certain amount of interpretation about the complexity of the case and/or the prognosis for the condition — both of which can affect the final level of E/M code. In the cases where there was disagreement, the final code was determined by choosing the code that the majority of experts agreed upon. Unfortunately, in one of the cases, there was complete disagreement between the experts and this clinical case was eliminated from the results pool. The lack of consensus between the CPT coding experts in the study was initially troubling; however, other studies have shown that this type of disparity is common. One group11 found agreement among CPT experts to range from 50% to 71%. This lack of consensus among experts is a complicating factor in teaching students to code correctly and a challenge of coding for practitioners who want to be confident about the accuracy of their billing.

Techniques for teaching coding

What is the best way to teach E/M coding? We are aware of 3 previous studies that examined teaching techniques that led to improvements in coding ability. In one, an instrument consisting of a flow-sheet with a concise set of notes for quick reference was used to determine a final code based on the standard Medicare rules for billing levels.19 The investigators concluded that the flow-sheet was a reliable tool for correctly assessing coding. In a second study,7 clinical cases that had been managed by residents were reviewed in a problem-based teaching format and used to learn coding basics, review previous coding assessments and reinforce proper coding by pointing out errors made by the residents. The authors reported that residents demonstrated an increase in accuracy of coding and a decline in under-coding. In a third study,20 subjects were provided with a single, 90-minute session taught by a coding specialist. The session was presented to residents who had been coding, and the results showed that even a single informational session improved coding ability for inexperienced coders. Unfortunately, this study did not show significantly improved coding ability in the more senior residents who were also experienced coders. While all of the above studies show an improvement in coding accuracy in novice clinicians, each was limited by a relatively small number of participants (11 to 20), and none provided follow-up to determine whether learned E/M coding concepts were retained over time.

The technique employed to teach coding in this study used 2 approaches: video and a brief interactive program that walked students through an E/M flow-sheet. Results from the study provide evidence that a blended format of teaching does lead to improved coding ability, with an odds ratio of 9.55 (95% confidence interval 2.05-72.52; P = 0.01) within 2 weeks of watching the video. The odds ratio did, however, decrease to 3.23 (95% confidence interval 1.05-10.68; P = 0.01) 7-9 weeks after the training session.

Student perceptions regarding prior education and importance of coding

Although this is the first study involving optometry student clinicians, results of the survey are in agreement with previously published reports of other healthcare profession trainees’ attitudes towards the importance of coding. Surveys of residents in surgery and emergency and internal medicine show that most believe the amount of training for E/M coding is inadequate, yet the overwhelming majority (~90% or more) feel that coding ability is important.2-5 The present survey of optometry students shows a similar result: 98% (52 of 53) agreed that coding will be important to their future careers and 71% (37 of 53) agreed that the education they had received regarding coding was not adequate. Studying a 1-hour video and 2 practice cases was enough to make students feel more knowledgeable about what an E/M code was (P value = 0.04) and that they had received adequate education in coding to prepare them for a future job (P value = 0.03). Compared to the Control Group, their confidence in E/M coding also had improved, but this result was not statistically significant (P value = 0.11).

Limitations of the current study

A limitation of this study is that only 7-9 weeks elapsed between when the students were exposed to the training video and cases and when they assessed the second set of cases, which were designed to examine the permanence of their new knowledge. However, even with this short interval, the data reveal a decrease in performance, suggesting that without additional training at regular intervals the significant gains acquired during the initial training may disappear. Future studies should test knowledge at longer intervals (e.g., 6, 12 and 18 months after the initial videos and exercise) to examine the rate at which the skills gained in E/M coding decline to pre-training levels. It seems likely that, while the use of interactive video and hands-on practice may be a good first step for teaching the basics of E/M coding, this new knowledge has to be reinforced in the clinical setting for it to become permanent.

Conclusion

Optometry students believe that learning coding is very important and that the current level of their education in this area is inadequate. A blended format of instruction in coding techniques is effective in improving these important skills in third-year optometry students. An advantage of the blended format is that it can be utilized throughout the optometry curriculum, even when fourth-year optometry students are away from their home institutions on external rotations. Some of the initial beneficial effects of this training early in the third professional year decreased over time. However, in addition to providing initial instruction, online interactive training could be an effective and practical method for reinforcing and consolidating this new knowledge if it were offered at regular intervals during the remainder of the professional curriculum.

Acknowledgements

The research for this paper was supported by a Starter Grant for Educational Research from the Association of Schools and Colleges of Optometry (M. Whiteside) with funding provided by Vistakon, division of Johnson & Johnson Vision Care, Inc., and an NIH Center Core Grant (P30EY003176; Richard Kramer, PI). The authors are very grateful to Drs. Chuck Brownlow, John McGreal and John Rumpakis, who kindly served as CPT coding experts. In addition, materials used in this study were donated by Primary Eyecare Network (PEN).

References

1. American Optometric Association Research & Information Center Executive Summary, Income from Optometry from the 2013 Survey of Optometric Practice March 2015. [cited 2015 Oct 17]. Available from: https://www.aoa.org/Documents/ric/2014%20Income%20From%20Optometry%20Executive%20Summary%20FINAL%20073015.pdf.

2. Adiga K, Buss M, Beasley BW. Perceived, actual and desired knowledge regarding Medicare billing and reimbursement: a national needs assessment survey of internal medicine residents. J Gen Intern Med. 2006;21(5):466-470.

3. Pines JM, Braithwaite S. Documentation and coding education in emergency medicine residency programs: a national survey of residents and program directors. Cal J Emerg Med. 2004;5(1):3-8.

4. Dawson B, Carter K, Brewer K, Lawson L. Chart smart: A need for documentation and billing education among emergency medicine residents? West J Emerg Med. 2010;11(2):116-119.

5. Fakhry SM, Robinson L, Hendershot K, et al. Surgical residents’ knowledge of documentation and coding for professional services: an opportunity for a focused educational offering. Am J Surg. 2007 Aug;194(2):268-9.

6. Moskowitz EJ, Nash DB. Accreditation Council for Graduate Medical Education Competencies: Practice-Based Learning and Systems-Based Practice. Am J Med Qual. 2007 Sep-Oct;22(5):351-82.

7. As-Sanie S, Zolnoun D, Wechter ME, et al. Teaching residents coding and documentation: effectiveness of a problem-oriented approach. Am J Obstet Gynecol. 2005 Nov;193(5):1790-3.

8. Sprtel SJ, Zlabek JA. Does the use of standardized history and physical forms improve billable income and resident physician awareness of billing codes? South Med J. 2005 May;98(5):524-7.

9. Brett AS. New guidelines for coding physicians services – a step backward. N Engl J Med. 1998 Dec 3;339(23):1705-8.

10. King MS, Sharp L, Lipsky MS. Accuracy of CPT evaluation and management coding by family physicians. J Am Board Fam Pract. 2001 May-Jun;14(3):184-192.

11. King MS, Lipsky MS, Sharp L. Expert agreement in current procedural terminology evaluation and management coding. Arch Intern Med. 2002 Feb 11;162(3):316-20.

12. Kassirer JP, Angell M. Evaluation and management guidelines – fatally flawed. N Engl J Med. 1998 Dec 3;339(23):1697-8.

13. McLean SA. The impact of changes in HCFA documentation requirements on academic emergency medicine: results of a physician survey. Acad Emerg Med. 2001;8(9):880-885.

14. McConville JF, Rubin DT, Humphrey H, Carson SS. Effects of billing and documentation requirements on the quantity and quality of teaching by attending physicians. Acad Med. 2001 Nov;76(11):1144-7.

15. Horner RD, Paris JA, Purvis JR, Lawler FH. Accuracy of patient encounter and billing information in ambulatory care. J Fam Pract. 1991 Dec;33(6):593-8.

16. Duszak R, Blackham W, Kusiak G, Majchrzak J. CPT coding by interventional radiologists: a multi-institutional evaluation of accuracy and its economic implications. J Am Coll Radiol. 2004 Oct;1(10):734-740.

17. False Claims Act (31 U.S. Code § 3729) [cited 2015 Oct 17]. Available from: https://www.gpo.gov/fdsys/pkg/USCODE-2011-title31/pdf/USCODE-2011-title31-subtitleIII-chap37-subchapIII-sec3729.pdf.

18. Szumilas M. Explaining odds ratios. J Can Acad Child Adolesc Psychiatry. 2010 Aug;19(3):227-229.

19. Kapa S, Beckman TJ, Cha SS, et al. A reliable billing method for internal medicine resident clinics: financial implications for an academic medical center. J Grad Med Educ. 2010 Jun;2(2):181-7.

20. Benke JR, Lin SY, Ishman SL. Directed educational training improves coding and billing skills for residents. Int J Pediatr Otorhinolaryngol. 2013 Mar;77(3):399-401.