PEER REVIEWED

Does it Make the Grade? Clinical Grading in an Optometric Program

Marc B. Taub, OD, MS, EdD

Abstract

Background: In this naturalistic and formative evaluation, the main research question related to the effectiveness of the clinical grading system as a method of grading and teaching at Southern College of Optometry (SCO). Sub-questions included how the system impacts student learning and performance, and whether it meets the needs of students, faculty, and administration. The Experiential Learning Theory, which involves a four-stage learning cycle of experience, reflection, conceptualization, and experimentation, was used to view clinical grading as an opportunity for reflection and to investigate whether the grading system was being used for that purpose.

Methods: Three administrators were interviewed, and focus groups were conducted with faculty and with students, with six participants each. Thematic analysis was used to code the qualitative data.

Results: Five themes developed: (1) Faculty expectations develop with experience, are highly personal, and have an impact on learning; (2) Faculty feedback can have a positive or negative impact on student learning; (3) The clinical grading system is used in a variety of ways and for different reasons by the faculty, administrators, and students; (4) Clinical grading is subjective and has challenges that inhibit its effective use; and (5) The clinical grading system continues to evolve and grow to meet the needs of all parties.

Conclusion: The current clinical grading system at SCO is partially effective for grading and teaching but has barriers that hamper student reflection. It has a variable impact on shaping student learning and performance based on how it is being used by both faculty and students. The grading system mostly meets the needs of the various stakeholders, but recommendations are presented that are specific to SCO and, more broadly, to optometric education.

Key Words: Clinical grading, clinical optometry, experiential learning theory, feedback, reflection

Background of the Study

The ultimate goal of any program is to produce competent practitioners; optometry programs are no different. Since the four-year curriculum is split between didactic and clinical experiences, different assessment and grading methods are required. In clinical courses, grading protocols are specific to the optometric program. In discussions with residents and colleagues from various institutions over the past 20 years, it became evident that although the programs generally attempt to assess the same types of information, they do so in very different manners. Some programs use Likert grading scales, others use written comments and others use a combination of the two. The frequency and timing of grading and feedback also vary by program. There is a lack of uniformity on many fronts and, hence, a lack of best clinical grading practices in optometric programs.

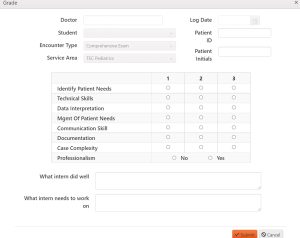

A grade is completed for each patient encounter at Southern College of Optometry (SCO). After the student sees a patient, they log the encounter and a request for an evaluation or grade is sent to the faculty member with whom they worked for that patient encounter. The faculty member is then responsible for completing the grade, which is sent back to the student. The clinical grading presented below is used throughout the student’s third and fourth years and is the same rubric, regardless of the clinical service in which the patient care takes place. There are two components to each grade (Figure 1). The first component consists of six categories, with a Likert scale of 1 to 3 to indicate performance. The faculty member also indicates whether the student was professional during the examination in a yes/no format. The second component of the grade consists of two text boxes where the faculty can speak directly to the student, letting them know precisely what they have done well and what needs improvement.

Figure 1. Example of the Grading Form Completed by the Faculty Member at SCO.

There are issues inherent in the system employed by SCO. Guidance is provided within the grading program, in the form of pop-up boxes, as to what each of the categories includes; however, there is no indication of what constitutes a grade of 1, 2, or 3. This decision-making is left solely up to the faculty member. An examination of the grading database shows a wide disparity among faculty members in average grade and standard deviation. Some faculty grade high and have a tight standard deviation; these are easier graders who poorly discriminate between excellent, acceptable and poor performance. Others tend to grade lower and have larger standard deviations, perhaps indicating that they are better discriminators of performance. This is based on their expectations for the student at that point in their careers and the difficulty/type of patient encounter. The administration offers little guidance on how to grade; this is purposeful and aims to promote academic freedom and decision making on the part of the faculty.
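As an illustration of the per-faculty comparison described above, the following is a minimal sketch of how an average grade and standard deviation could be tabulated for each grader. It is not the SCO system: the record layout, the faculty labels (`F_a`, `F_b`), the scores, and the choice of the population standard deviation are all assumptions made for the example.

```python
from collections import defaultdict
from statistics import mean, pstdev


def grading_stats(records):
    """Summarize grades per faculty member.

    `records` is an iterable of (faculty_id, score) pairs, where score is a
    Likert value of 1, 2, or 3. The layout is hypothetical.
    """
    by_faculty = defaultdict(list)
    for faculty_id, score in records:
        by_faculty[faculty_id].append(score)
    # Population standard deviation is an assumption; a sample SD could be used instead.
    return {
        faculty_id: {"n": len(scores), "mean": mean(scores), "sd": pstdev(scores)}
        for faculty_id, scores in by_faculty.items()
    }


# Invented data: one grader who always gives a 3, one who uses the full scale.
records = [
    ("F_a", 3), ("F_a", 3), ("F_a", 3), ("F_a", 3),
    ("F_b", 1), ("F_b", 2), ("F_b", 3), ("F_b", 2),
]
stats = grading_stats(records)
for faculty_id, summary in stats.items():
    print(faculty_id, summary)
```

In this invented data set, the first grader has a high mean with no spread, while the second has a lower mean with a wider spread, which mirrors the two grading patterns described in the text.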

Even though the clinical grading system is consistent among the different clinical services, and the grading rubric remains unchanged, what constitutes a 1, 2, or 3 can also differ based on the clinical service in which the patient is seen. This is related to the skills needed to provide the care and the demands of the faculty in those services.

The entire system of grading at SCO has not been evaluated from both a top-down and bottom-up approach since being created more than 20 years ago. A grading committee is convened every 2 years, but only small alterations are made to the grading matrix. While this allows for fixes on a micro-level, it does not address macro-level issues and concerns. In this study, through interviews with administration and focus groups with students and faculty, my hope is to create an understanding of how the grading system is being used by the various parties and whether it is effective when examined through the lens of the experiential model. This understanding may lead to the creation of a set of best practices for other colleges to mirror.

Theoretical Framework

Experiential Learning

Experiential Learning Theory originates in the work of educator John Dewey. Dewey1 espoused the concept that learning occurs during and from experience. He states in his article, “My Pedagogic Creed” from 1897, “…education must be conceived as a continuing reconstruction of experience…the process and goal of education are one and the same thing.”2 This became the foundation for what is now known as progressive education. According to Dewey,1 not all experiences produce learning; some may miseducate, leading to less-than-meaningful experiences where learning or growth does not occur. For learning to occur, the learner must connect with the experience and the experience must be genuine.3

Kurt Lewin, an American psychologist, had a profound influence in the fields of social psychology and organizational behavior. The T-Group laboratory method and action research he conceived form a four-stage cycle in which “learning, change, and growth are seen to be facilitated best by an integrated process that begins with here-and-now experience followed by collection of data and observations about that experience.” The data is analyzed and used to modify behavior and the choice of new experiences. The concrete experience and feedback processes were key factors in this model.4

Jean Piaget, a Swiss psychologist, is well known in the field of child and cognitive development. He identified four major stages of cognitive growth that begin at birth and continue to about ages 14 to 16. In the sensory-motor stage, learning occurs through touch in the environment. In the representational stage, the child develops reflective orientation, transferring the internalized actions to images to be manipulated. In the stage of concrete operations, the child uses their powers of induction, relying on concepts and theories to give shape to their experiences. In the stage of formal learning, which takes place during adolescence, the child returns to active orientation, but with the ability to engage in deduction and reasoning. Now, they can develop theories and test their validity. These concepts can be extrapolated to the general learning process during adulthood.4

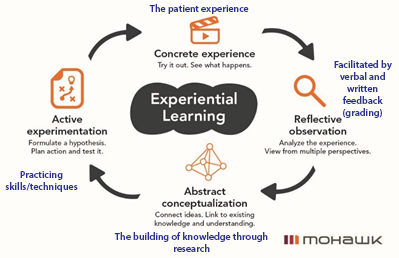

Drawing upon the works of Dewey,1 Piaget,5 and Lewin,6 Kolb7 defined experiential learning as the process whereby knowledge is created through the transformation of experience. The Kolb learning model consists of four stages through which learners progress during the learning process: concrete experience, reflective observation, abstract conceptualization and active experimentation. Learning is effective when there is a progression by the learner through these four stages. The model is more complicated than a circular cycle, however. It is composed of two modes of grasping experiences (concrete experience and abstract conceptualization) that are oppositional and two modes of transforming experiences (reflective observation and active experimentation) that are also oppositional.

The learning cycle is conceptualized as the individual starting the process by having a concrete experience, which is the basis for observations and reflection. The reflections are integrated into abstract concepts from which implications can be drawn and then actively tested in future experiences. Zull provided a connection between Kolb’s cycle and neurology.8 When the four stages of the Kolb experiential learning cycle are viewed in the context of the clinical optometric learning experience, the connections shown in blue in Figure 2 emerge.

Figure 2. Kolb’s Experiential Learning Theory Modified to Include Clinical Grading (“Experiential Learning,” n.d.).

In looking more closely at the reflection component of the process, the question arises as to whether clinical grading offers the opportunity for reflection to take place at a deep enough level.

The true purpose of this study is education. Optometrists, who are also educators, strive to produce the best-trained optometrists. Clinical grading, in theory, should support the notion that the role of the teacher is to support learning in order to guide the student in having meaningful experiences. Yes, it has a purpose in assigning grades of pass and fail, but whether the clinical grading system at SCO is fulfilling the first mission is a major question. Not only was this study concerned with the effectiveness of our clinical grading, but it also explored how that grading influences the learning process and overall student education.

Research Questions

These are the research questions directing this study:

- How effective is the current clinical grading system as a method of grading and teaching at the Southern College of Optometry (SCO)?

- How does the clinical grading system at SCO shape student learning and performance?

- How does the current system used for clinical grading at SCO meet the needs of students, faculty, administration and the Accreditation Council on Optometric Education (ACOE)?

Research Design

In this naturalistic and formative evaluation study, data collection consisted of two methods, interviews and focus groups, to gather information from a variety of sources: students, faculty and administration. Using a semi-structured interview process, three members of the SCO administrative team who played a role in establishing the clinical grading paradigm currently in use were interviewed. The interview questions for this group are representative of the types of questions asked for all groups and can be found in Appendix A. The second group studied was the clinical faculty, as they provide the grades in the clinical grading system. This group of six faculty was studied through one focus group and chosen by purposeful sampling,9 based on the length of time teaching at SCO and specific area of expertise. The third group, which took part in a separate focus group, was made up of the students. In the clinical programs, 3rd- and 4th-year students see patients and submit evaluations (which will later be graded) for each clinical encounter to the faculty. Three students from each year were included in the focus group, for a total of six students who participated. Students were selected based on time of response to an email request for participation sent to both the third- and fourth-year classes; those who responded first were chosen. Administrators taking part are identified as A1-A3. Faculty are identified as F1-F6 and students are identified as S1-S6. All interviews and focus groups took place online via Microsoft Teams (Redmond, WA) instead of in-person in accordance with the COVID protocols at the time. This study was deemed exempt by the IRB at the University of Memphis and SCO and was completed in compliance with the Declaration of Helsinki. Informed consent was completed by each subject electronically.

The most appropriate method of analysis to understand the data in this study was thematic analysis. Thematic analysis involves the identification of recurring patterns that are presented as overarching statements or themes.10 Braun and Clarke11 define thematic analysis as “a method for identifying, analyzing, and reporting patterns (themes) within data.”

Findings

Five themes, each with multiple subthemes, were identified (Table 1) and are described in more detail below.

Table 1. Summary of Themes and Subthemes Developed in this Study.

Theme 1: Faculty expectations develop with experience, are highly personal and have an impact on learning

In the interviews and focus groups, participants from all three groups in the study continually spoke about faculty expectations of student performance and how those expectations impacted use of the clinical grading system. Faculty grading is very much a “moving target” based on numerous factors, including their experience in patient care and teaching. Since every faculty member has a different path of development, their expectations are personal. A1, a 30+ year faculty member, describes how his expectations of student performance matured:

You know, it was unconscious because I can’t provide you with a rational step of how I developed it [his expectations of student performance]. I have obviously been doing this [clinical grading] very long time. I’d spent virtually all of my time in clinical teaching, and so really it was built up over years. (A1, Para. 43)

In contrast, F5, who has been teaching for three years, highlighted the need for experience and how that impacts the grading process in the following statement: “You just need a year or two of working in the clinic to see what is truly, you know, what’s typical for a student, what’s atypical for a student” (F5, Para. 154).

Many of the faculty personalized the concept of expectations, making comments like, “perform higher than what I would have expected” (F4, Para. 61), “being outside of my expectations,” (F4, Para. 58), “where I think they should fall” (F6, Para. 61), and “I expect them” (F2, Para. 65). A2 also made a similar comment: “below the level at which I would expect them to be performing” (A2, Para. 25).

The expectations can change based on the specialty service in which the patient is seen, the student’s level of experience, and the complexity of the patient, causing issues in how the students use the grading system and their ability to learn from its use. Comments such as “I’ve learned what they expect for me” (S1, Para. 29) and “I do feel like I kind of tailor my [patient eye] exams to who I’m working with in a way” (S1, Para. 98) highlight this issue.

The administrators highlighted that “not everybody [referring to faculty] was using this [clinical grading] system appropriately” (A2, Para. 34). For example, A2 brought up how some faculty were unable to differentiate between good versus bad student performance and “would give the exact same grade hundreds of times in a row” (A2, Para. 34), which is obviously not reflective of student performance, since even one student does not perform in the same manner with different patients.
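The pattern A2 describes, the same grade given many times in a row, is the kind of thing that could be flagged automatically. The sketch below is only an illustration under stated assumptions: the function name `longest_identical_run` and the sample scores are hypothetical, and nothing in the study indicates that SCO performs such a check.

```python
from itertools import groupby


def longest_identical_run(scores):
    """Return (score, length) for the longest run of consecutive identical scores.

    `scores` is one faculty member's grades in chronological order. Each item
    may be a single Likert value or a tuple of all category values.
    Returns (None, 0) for an empty sequence.
    """
    best = (None, 0)
    for value, group in groupby(scores):
        length = sum(1 for _ in group)
        if length > best[1]:
            best = (value, length)
    return best


# Invented example: four consecutive grades of 3 form the longest run.
example = [2, 2, 3, 3, 3, 3, 1, 3]
run = longest_identical_run(example)
print(run)
```

Any threshold for what counts as too long a run would be a judgment call for the institution; the sketch only reports the run itself.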

Theme 2: Faculty feedback can have a positive or negative impact on student learning

Faculty feedback having a positive impact on student learning centered around two main topics: building student confidence and faculty feedback. The concept of building student confidence came up in a number of quotes from the students and how the feedback they received within the grading system helped in creating and building that confidence. S3 related the concept of confidence and how the grading system helped build her confidence in this quote, showing the importance of the concept in her education.

My goal out of the grading system, it’s like giving me that confidence. Tell me what I need to grow on and if I do something wrong, yeah, I’d like to know definitely. But in a constructive criticism way rather than harsh. (S3, Para. 184)

These student comments show the impact on learning that can occur in giving feedback through the clinical grading system. F1, an almost 30-year faculty member, hits on confidence and the importance of developing it from the teaching side of the equation. This individual uses the grading system with building confidence as a specific goal and makes comments that assist the students in creating the mental scaffolding to be excellent clinicians. “I think that confidence is built when you reaffirm that they’re doing proper behaviors and really stepping outside the norm and identifying themselves as an exceptional individual” (F1, Para. 105). The building of confidence is evident and shows the positive impact on learning that is possible in the use of the clinical grading system at SCO.

The students had thoughts on how the feedback made them feel, the types of feedback that they received and appreciated, as well as the impact of the feedback on their desire to learn and interact with the faculty. S3, a 4th-year student, highlighted the level of detail in the feedback that they prefer and how they learned to view the feedback through a learning lens. While feedback that is critical in nature can be seen as negative by students, this student sees it as a growth opportunity.

So, if they see maybe something they need work on just like this whole broad situation where it’s like I can pinpoint like, OK, in this particular case you can work on this. So, I guess seeing it narrowed down to where I need to learn and looking at it. And then I guess it comes into looking at it as a learning opportunity. (S3, Para. 131)

Looking at grades, both bad and good, as a learning opportunity and not as punitive in nature is important for student development as clinicians. That students look at grades as a learning or growth opportunity shows that they do in fact understand that the point of grades is development.

As part of the use of the text boxes, many faculty take the opportunity to disseminate information to illustrate certain learning points or resources for the student to continue their growth. S4 not only talks about being given resources to explore, he also connects them with the opportunity to learn and grow as a clinician: “They also include some articles or some publications that are relevant to that case to help you reach that growth” (S4, Para. 53).

While the feedback provided as part of the clinical grading system is intended to have a positive impact on student learning, unfortunately, that is not always the case. In discussing the types of feedback that the students like and that are helpful in the learning process, they were also honest about what manner of feedback was not beneficial and even detracted from their use of the clinical grading system. Also included was the impression that the timing of the grade completion influenced the potential impact on learning and actually had the potential to become negative in nature.

Student observations emphasize the lack of detailed comments from faculty limiting their ability to grow and learn from the clinical experiences. “I’ve had multiple encounters where the doctor writes in that ‘need to work on’ box, LOL (laugh out loud) I have to write something here or there has to be words here -that’s happened multiple times” (S6, Para. 73). This poor level of feedback seems to halt the reflective aspect of the experiential learning cycle for students.

S2’s comment takes the poor feedback quality a step further and shows his frustration, since he feels that there is always something that he can learn from every patient he examines. He makes the leap that perhaps the lack of comments is a sign that the faculty are not spending an appropriate amount of time in the grading process:

So, when you get a staff doctor that doesn’t give you anything in that section, it’s almost, I almost kind of look at it as all the like, they’re not spending too much time working on this feedback for this encounter. (S2, Para. 24)

Other comments highlight that some of the faculty are not creative and use the same feedback over and over. Several students felt that those faculty copy and paste phrases that are general in nature, limiting the growth opportunities: “But there are also some docs that have pre-done little things that they copy and paste into your ‘what they like’ section” (S5, Para. 8). S4, a 4th-year student, responded with “Great job. What needs work on…nothing” (S4, Para. 69) when questioned about what types of comments are not helpful. Without feedback that enables reflection, there once again is a break in the experiential learning cycle.

Faculty are given leeway to complete grades within a few days. They are encouraged to do this as quickly as possible, but it does not always happen as requested, as evidenced in the following student statements. The comment from S1 shows the variability in how long grades take for completion: “Sometimes before you’re even done, they’ll grade it. Sometimes it’s like a week later” (S1, Para. 8). S6 made two comments during the focus group, “a week later when they’re grading me” (S6, Para. 106) and “it might take them a week to put it in my grade” (S6, Para. 47). The lack of timely feedback directly affects the ability to learn from those encounters. Having immediate feedback when the exam experience is fresh is quite different from getting that same feedback a week later, after the student has seen a number of additional patients and might not recall the details of the patient encounter. Grading that takes an extended period of time is not well-received by the students and has the potential to foster negative experiences and learning.

Theme 3: The clinical grading system is used in a variety of ways and for different reasons by the faculty, administrators and students

The administrators use the grading system to assess and document student performance, both for legal reasons, in case a student failure is contested, and to ensure they are graduating well-rounded students who can gain licensure in all 50 states. The grading system enables the administrators to assess faculty grading ability by tabulating grading statistics such as average and standard deviation for each faculty member. For accreditation purposes, the grading system serves a dual role in tracking the patients the students see by year/semester and type (age, gender, ocular condition, etc.).

Accreditation needs are relatively straightforward, but the exact construction of a grading system is left to the institution. A3 elaborates how SCO meets the needs of ACOE and used ACOE requirements as a base on which to build the SCO system.

We have to demonstrate that we are assessing the individual’s [student] knowledge and skill in certain areas and we do that based on ACOE, and graduate attributes that are internal graduate attributes that have been created using ACOE as a starting point. (A3, Para. 57)

Part of assessing progress is related to accreditation as highlighted above but there is something deeper in terms of patient care and the college needing to attest to the public that this doctor is safe and capable of treating patients. We need to check off the accreditation box, but we must also use the grading system to ensure a well-rounded and safe clinician.

And yes, we’re saying that they [students] satisfy the requirements of our educational program…but…we’re also giving our acknowledgement that we believe they’re safe to independently see real human beings in the care of their eyes. (A2, Para. 26)

As an offshoot of attesting that the student is safe to practice and treat patients, the administration also uses the tracking aspect of the system to make sure that students see a variety of patients, so that they can treat the widest array of conditions and be well-rounded. A2 states, “It allows us to track clinical experience, also, at a moment’s notice that keeps time so that we could, if we needed to, redirect certain patient types of certain students” (A2, Para. 63).

Having a record of student progress is crucial but almost as important is “having defensible records for grades that are assigned, documentation” (A3, Para. 57). There have been instances over the years of students failing a clinical course or the entire program and challenging the grades legally.

In terms of assessing faculty grading ability, administration noted that some faculty were not using the grading system effectively, as mentioned in Theme 1. Since feedback and assessment are an important part of a faculty member’s job description, it was decided that the system could be used “to evaluate the effectiveness of the clinician who’s doing the grading” (A3, Para. 57) and “could be part of our [SCO’s] yearly review” (A2, Para. 34). Used in this manner, the grading system provides not only an assessment of student clinical performance, but also of faculty grading functions.

The faculty at SCO is diverse in years spent teaching, specialty areas of interest and background, but they were consistent in the reasons they gave for grading clinically. The most common answer centered around feedback on what the student did well and on what they need to improve. The faculty also use the grading system to document student performance and to share information/resources with the students. This was highlighted by F3 who stated, “giving students direct feedback about patient encounters” (F3, Para. 8), F2 who stated, “[grading] gives consistent feedback on every encounter” (F2, Para. 143), and F4 who stated, “most of the time it’s just feedback, so they know how they’re doing” (F4, Para. 9).

Beyond student feedback, F5 identifies the need for documentation of performance as an important aspect of grading related to ensuring adequate performance. This indicates that the faculty is aware that the grading system may be used for legal purposes. He stated that grading is a way “to help the student identify when they’re not performing the way they should be and to properly document if the student is consistently not performing where they should be and it potentially warrants further intervention” (F5, Para. 4).

“Sharing information” (F5, Para. 4) was also a common reason provided by several faculty. F2 connected the dots between providing that type of feedback and the potential impact on learning: “Additional things or pearls that I … try to give them those little pearls that might help him next time or just things from experience that that maybe we didn’t talk about during the encounter” (F2, Para. 10).

Feedback is a crucial aspect of what the students want out of the clinical grading system. They take the concept of feedback in the grading system a step further by connecting it to its impact on learning. S2 highlighted correcting his actions to best serve the needs of the patient based on faculty feedback: “To know that the things that I’m doing in clinic are the correct things are the right things and I’m doing them in a way that’s effective and working for that specific patient” (S2, Para. 24). S3 internalizes the feedback and relishes the opportunity to improve her performance but also links this growth to the need for independence after graduation, stating, “it is great for you to improve, and that’s almost the way you have to look at it. It’s kind of like they just want you to be better and you’re about to be on your own” (S3, Para. 14).

There is progression in how the students use the grading system as they move throughout their clinical careers. The 4th-years in the focus group, who returned for their final semester of schooling at the clinic, spoke about how they currently used the system and gave some contrast to their use in third year.

I did read more of what they wrote my third year when I was more anxious about what I was doing and how I was. But now I’m more confident in who I am as a doctor. (S5, Para. 31)

S5 continued and discussed how they went from looking for specific faculty feedback to looking for more general feedback as they grew in confidence in the 4th year after they returned from off-campus externships.

So, I did that for about the first 2 or 3 weeks coming back into the clinical setting here, but now I am more comfortable with what the doctors want, so I go look, oh, they put the grade and it’s met, everything is probably fine, so I did check it a little more at the beginning. (S5, Para. 49)

Similar to the administrators, the students also use clinical grading to track their patient encounter numbers and the types of patients they have seen. As with the administrators, the purpose of tracking is to ensure that a wide variety of patients are being seen. S3 focuses on the number of encounters and types of patients.

Uh, definitely for patient tracking. I think it like catches two birds with one stone. You type in all their demographics, so we have this excel sheet we can export at the end that lets us know how many patient encounters we’ve had. (S3, Para. 18)

S4 adds in the fact that knowing the types of eye conditions being seen is also an important component of the tracking aspect of the grading system, in saying, “being able to kind of keep track of how many people I’m seeing and kind of what I’m seeing as well” (S4, Para. 22).

Theme 4: Clinical grading is subjective and has challenges that inhibit effective use

Clinical grading is subjective in nature. F1 sums up this concept in this statement about clinical grading on a global level: “I think all assessment systems have flaws, particularly when you’re dealing with subjective determinations of performance” (F1, Para. 185). They focus directly on the issue of subjectivity. This sentiment was repeated several times by both faculty and administrators: “I think to a certain extent it is subjective” (F5, Para. 154), “There’s a lot of soft skills and those are very subjective and difficult to interpret” (A2, Para. 6), and “The big challenge with clinical grading is that it is pretty much 100% subjective” (A1, Para. 7). The most important takeaway from these statements is the acknowledgement from both the faculty and the administrators that grading is subjective. Only when it is accepted that there is inherent bias in some form or another in the clinical grading process can an approach to correcting for that bias be developed. A3 points out that attempts have been made toward standardization, and with the help of technology, they feel that things have improved, and they are satisfied with it.

I think our system is a good system and it’s much better than what we used when I first got here. I mean, it was so very subjective back then. It’s still subjective but given the assistance of automation, of technology to try to standardize, what we’re looking at, I think I, I mean, I’ve been very satisfied with the system. (A3, Para. 163)

Even so, the sentiment remains that faculty struggle with certain aspects of grading, such as making meaningful comments and discerning differences in performance, which has been covered previously.

The average student to faculty ratio in the clinical programs at SCO is 4 to 1. This means that there can be four patient exams going on at the same time with four different students and a single faculty supervisor. Therefore, there is literally no way for the faculty to be 100% involved in a student’s examination and in some cases, the faculty–student interaction is quite limited based on the student and difficulty of the patient. A2 highlights not only the fact that the faculty is not in the room for the entire encounter but the difficulty that poses from the doctor’s standpoint, “I also understand that we’re not in the exam lane watching everything live. We’re interpreting what’s going on” (A2, Para. 69). S2 shows the impact of the faculty not being present in the room for the entire encounter on the usefulness of the grading system.

They’re [faculty] not with you the whole encounter, they’re only in there for the very end, so to just kind of get a boost [from faculty that] … what you’re doing throughout the majority of the encounter is the right thing is not very helpful. (S2, Para. 25)

This raises the question of how the grade can be accurate and appropriate if the faculty is not observing much of the testing, patient interaction and communication between the student and the patient when most of the graded quantitative categories require some aspect of student/faculty interaction. The faculty must rely on their experience in patient care and teaching to know when testing needs to be repeated and when to trust the student’s results.

The faculty at SCO are teachers. They want the students to perform at a high level and be the best doctors. The faculty are also human and have emotions. Telling a student that they are lacking in certain skills, have poor communication, or interpreted test results incorrectly does not come easily for some. Giving negative feedback or constructive criticism, from an emotional standpoint, can be painful for the faculty and difficult for the students to hear. It can also be uncomfortable and can strain the student/faculty relationship if it is not delivered with compassion and empathy and is not interpreted by the student as the faculty trying to teach and be helpful. The prospect of future interactions between faculty and students can weigh heavily on the faculty member doing the grading and could perhaps lead them to be less honest and tough than needed, as highlighted by A1: “Many faculty are reluctant to even point out negative things to students for fear of having to deal with the student face to face the next day in the clinic or the next week in the clinic” (A1, Para. 48).

Prior to starting in the clinical programs, all students undergo an orientation to the physical building as well as the clinical processes and policies, including how the grading system works. This is done in a large, lecture-style format. Faculty are also provided an orientation, which is part of a larger orientation to the college. Since faculty hiring is sporadic, the orientation may take place in a small group or individually. A prominent subtheme is the perceived lack of a proper orientation and guidance in grading provided to students and faculty. This was addressed by the students, faculty and an administrator. F2, a relatively new faculty member, describes how he learned about the grading system and the frustration felt: “I felt a little just thrown in…they didn’t give me really any much insight other than me just watching them interact with students” (F2, Para. 150).

The lack of a formal orientation and the inadequate guidance are highlighted by F1, a long-time faculty member who has administrative experience: “I think the thing I don’t like about it [grading] is this a lack of comfort that faculty have, the lack of guidance that they get” (F1, Para. 181). A3, a long-time administrator who has faculty experience, corroborates that sentiment, saying, “We have never provided consistent support to the graders. We don’t provide an orientation on how the system functions to new faculty. We don’t give them an overview of clinical grading” (A3, Para. 43).

From the student side of the grading equation, these problems of poor understanding due to inadequate orientation exist as well. The students highlight the lack of what they consider to be an adequate orientation, one that explains not only how to physically use the clinical grading system but also what the various levels of 1, 2, and 3 actually mean and how they need to perform to be at those different levels. S4 highlighted the issue of a learning curve, which can take quite some time, in understanding the clinical grading system and what the various levels on the scale actually mean in the following statement:

The 1, 2, 3 scale is easy to interpret if you figure out what each one means…. You know, the 1st 100 encounters I had, I was still like, what do these mean? And you have to like really sit down and look at it. But once you kind of get it to where you’re like, “OK, I understand what’s going on here.” (S4, Para. 129)

In the clinical grading system, after the grades are completed by the faculty member, the data is converted from a 1 to 3 scale into a 100-point scale. This happens automatically behind the scenes. While this information could be provided during the formal orientation, it is purposely hidden “so that it doesn’t introduce an observer bias in doing those gradings” (F1, Para. 181). The students, like the faculty, are unsure how the system works in the creation of the encounter and final grades. S5 is more global in her comment, saying, “I don’t totally understand how that works” (S5, Para. 9). S1 specifically tackles the “how” of the grade, stating, “I don’t know like how it’s calculated or how you get met or below” (S1, Para. 101).

A1, in two separate comments, corroborates the comments by the students that “there is a veil of secrecy over how grades are assigned” (F1, Para. 181). He stated, “I don’t know that there’s a lot of explanation or transparency to the faculty as a whole into how the numbers get converted” (A1, Para. 47) and “I don’t think that faculty often have a good idea of how the numbers they turn in for clinical encounter grades eventually turn into a course grade” (A1, Para. 48).

Theme 5: The clinical grading system continues to evolve and grow to meet the needs of all parties

The current clinical grading system has been in use for about 18 years. It has evolved since that time to meet the needs of the students, faculty and administration, and continues to evolve. Having a grading system that is dynamic is quite important to all three administrators. A3 reported that the notion of a dynamic system was part of their plan all along: “Our goal was to look at it [the clinical grading system] and upgrade it every three to five years” (A3, Para. 21) and “the product that we have today has gone through at least three significant revisions” (A3, Para. 35). A1 went a step further than the other administrators, stating, “There’s always been a continual push to change it and I think what we have now, I don’t think there’s been any backward steps” (A1, Para. 3) since the new system was implemented in 2005.

As the current grading system went through a wholesale change many years ago, but has continued to evolve since that time, it is logical that future changes can be made towards the goal of further improvement. All parties involved offered suggestions that, in their opinions, would make the system not only more user friendly but also have a better impact on learning. The faculty suggestions centered around changing the quantitative grading scale to allow for greater discernment of student performance, not having to give feedback on all encounter types and a more formal orientation of the grading system in combination with education concerning best practices. The students focused on getting a higher level of feedback from the faculty and improving the language surrounding the grades. In these requests, the students are looking for ways to enhance opportunities for two aspects of the experiential learning cycle, reflective observations and abstract conceptualization.

Discussion

In looking at the three research questions posed, it would be easy to simply answer that the current clinical grading system is partially effective for grading and teaching, has a variable impact on shaping student learning and performance, and mostly meets the needs of the various stakeholders, but more detail is needed. For this process, the two sub-questions will be answered first, using them as a foundation to answer the main research question. The first sub-question, how the clinical grading system used at SCO meets the needs of the Accreditation Council on Optometric Education (ACOE), students, faculty and administration, will be tackled first. The answer to this question is found in several of the themes.

Theme 3 outlines distinct ways the grading system is used by each group (administrators, faculty, and students), as well as the overlap among the three groups. Administration has broader uses for clinical grading, including assessment and documentation of student performance for legal reasons, but also to meet the needs of accreditation. Administration also uses it to evaluate faculty performance. Faculty and students both use grades for feedback on performance, but on opposite ends of the equation. Faculty provide the feedback as a means of teaching and the students use it to gauge their performance and to gain new knowledge. The clinical grading process is a tool through which these undertakings occur, but it is by no means the only way faculty encourage critical thinking and reflection in students. Verbal feedback happens as well. The faculty spoke about providing both verbal and written feedback and how both types of feedback supported each other, especially in terms of students performing poorly. The students talked about having verbal interactions with the faculty in the moment and how that impacted their clinical learning. They also felt that faculty would often use the written feedback to support what was discussed verbally with them. Students and administration also use the grading program, which records patient demographics and diagnosis, to track patient encounter data.

Theme 1 and Theme 4 show some of the weaknesses and limitations in the grading system that have an impact on its ability to meet needs and that cause frustrations for the three groups. The subjectivity and variability in faculty expectations are frustrating to the students. The level of orientation and guidance provided in using the system, as well as having a poor understanding of how the grading system works on both the front and back ends, is frustrating both to the students and faculty. The administrators confirm these frustrations as well and, in some cases, have attempted to address the issues.

Theme 5 brought up suggestions for further improvement of the system from all three groups. The desire for further change should not be interpreted as the system not working for the various groups, but rather that improvements could be made to suit the needs of all parties better. While there are inherent challenges in clinical grading globally and at SCO, the overall sentiment is that the grading system does in fact meet the needs of the various parties. There is room for process improvement and change, as a system that stays the same and is not examined through a needs-based lens becomes stale and obsolete.

The findings for the second sub-question, addressing how the current clinical grading system at SCO impacts student learning and performance, come mostly from Theme 2. Kolb’s Experiential Learning Theory,7 introduced previously, was modified to include clinical grading: the four stages of the cycle were connected to different aspects of the patient care experience to show how clinical grading can be seen through the experiential learning lens (Figure 2). If faculty were more aware of the cycle and the four stages, they could work with the students to encourage them in moving through the cycle successfully. The opportunity to provide written feedback is one tool that they have in this process. Administration should work with the students and faculty to enhance the opportunities for experiential learning in general and be aware of the cycle in creating time for the students to make their way through it. While the quantity of patient encounters is important on many fronts, the quality of the patient interactions and time with the faculty is just as crucial.

Even though the four stages are introduced as separate stages by Kolb, in reality, there is opportunity for constant movement within the cycle even while the patient is undergoing an eye examination. Reflection, one of the stages of Kolb’s7 experiential learning cycle, takes place through faculty feedback, both verbal and written. While the written feedback is part of the after-exam experience in the grading system, verbal feedback can and should occur as the student works with the patient. For example, when the student does a set of testing and presents the proposed diagnosis and treatment to the faculty member, there is an opportunity for discussion in which the faculty member can offer their opinion and guide the student. Another example is when the student’s testing of the patient is checked by the faculty member. Not only does this offer the student the opportunity to watch proper procedure, the discussion of the result and how to interpret it in light of other data can be illuminating for the student. If the student then has the opportunity to practice that skill in the moment, which many faculty members insist upon, they are tapping into the active experimentation aspect of the cycle.

The goal is for constructive criticism to be offered, through which students can reflect on their performance and grow as clinicians. It is natural to give specific verbal feedback and instruction as the student is making their way through the patient eye examination process, allowing for immediate reflection. Written feedback, according to the students in the study, often lacks detail and is more general in nature. Feedback that is rich and specific in detail builds student confidence, while feedback that lacks detail or is perfunctory does not enable reflection and hampers the impact of the clinical grading system on student learning.

The timing of the grading also takes away from the potential learning aspect. Considering the experiential learning cycle again and looking at the feedback and grades as an opportunity for reflection, the sooner that opportunity is provided to the students the better. Grades completed weeks later are considered “out of sight, out of mind” by the students. For example, students typically see 20 to 30 patients weekly and, if a grade is not completed for 2 to 3 weeks, they have to attempt to recall the graded patient and what took place during that experience. Most would find it quite challenging to do so in the midst of seeing more patients and studying for classes or boards. This delay essentially strips the student of the opportunity to reflect in a meaningful way.

Theme 1 also shows how the grading system can have an impact on learning. Faculty expectations of students generally change throughout the 2 years that the students are in the clinic. They change based on service, student experience and patient difficulty. These expectations are individualized to the specific faculty member and are not standardized clinic-wide or even service-wide. While there are general lists of expectations built into the clinical service syllabi by the chiefs of service, the course masters for the clinical courses, these are not typically distributed to the general faculty and are not service-specific. These are, in fact, available to the students via an online learning platform, but students are not required to read them or even acknowledge that they have looked at them. The students nonetheless find it challenging to focus on seeing patients and meet the ever-changing and variable faculty expectations. Compounding this issue, as seen in Theme 4 (Clinical grading is subjective and has challenges that inhibit effective use), is the lack of an adequate orientation and guidance in using the system and not understanding how it truly works behind the “veil of secrecy” to produce a grade.

While the clinical grading system was designed to provide quality feedback to the students, in some cases, that is either simply not happening, or it is indeed happening, but the feedback is not viewed. In other cases, it is occurring, and the impact is felt by the students and faculty. Suggestions made by the students, faculty and administration have the potential to increase the likelihood of the latter.

The answers to the two sub-questions lead to the following conclusion about the main research question: the clinical grading system at SCO meets the overall needs of the students, faculty and administration. This finding supports the administration’s view of the current clinical grading system: even though some minor changes were needed and have been made to fine-tune the system to better meet the needs of the students, faculty and administration, no significant changes were necessary or seem to be necessary currently. For the faculty, the system meets their general needs, but they have recommendations to remove barriers in order to (a) improve the quality of student feedback they provide, (b) enhance the potential for student reflection, (c) better understand how the system works, and (d) increase the consistency of how the faculty use the system. For students, it meets their needs, but they also made recommendations to remove barriers that hamper effective use of it in their learning process and limit the opportunity for reflection. When looking at the clinical grading system through an experiential learning lens, the system is used to enhance student learning through reflection. However, the recommendations made are aimed at improving those opportunities for the students. Having an understanding of the experiential learning cycle, the opportunities for faculty teaching and student learning throughout the patient care experience, and how those opportunities can be enhanced is a crucial first step in clinical instruction at SCO.

Even though the ACOE guidelines for clinical grading allow optometric programs such as SCO to craft their own systems, a general set of recommendations was formulated based on the findings of this study and is listed below. I hope these suggestions allow other programs to look at how they accomplish their clinical grading and encourage them to look at it through an experiential learning lens that emphasizes reflection. Feedback is vital to promote and enhance reflection and must be included within every health professional clinical grading system, including optometry.

Connecting the Findings to the Literature

Many of the themes and concepts that developed from this research can be connected to the literature concerning clinical grading. As discussed previously, there is a dearth of literature related to optometric clinical grading, so the connections will be made to other health professions, such as nursing, dentistry and medicine.

Expectations

One of the main themes that emerged is related to faculty expectations, the development of these expectations, and their impact on student learning. In a review of the literature on evaluation, Orchard12 identified six factors that had the potential to be barriers to the evaluation of student nurses’ performance in the clinic. Three of the factors link to expectations in some manner:

- The relationship between the complexity of the student’s clinical performance expectations and the degree of subjectivity of appraisals

- Evaluator’s expectations of student’s professional socialization

- Personal values of the evaluators

If clinical supervisors are indeed the “experts” at helping students learn the knowledge needed before graduation and serve to help fill in the gap between school and practicing,13 it is important to also acknowledge, at the same time, that evaluators are human and not perfect. As Herbers et al.14 concluded: “Evaluators may vary considerably in their abilities to discern strengths and weaknesses in residents, and they may apply different standards when judging a resident’s performance”. While this quote references residents, it can be inferred that the same could be true about judging student performance. Herbers et al.14 went one step further, making the connection to expectations: “Evaluators may be positively or negatively influenced in their assessments of residents because of expectations or biases”. This quote aligns with the expectation issue in this study: faculty members, including those at SCO, each have different expectations for their students that developed over their careers based on their own clinical experiences and instincts.15 Faculty may emphasize different aspects of performance,16 disagree on treatment philosophy and differ on the choice of testing procedures. What one faculty member deems as an acceptable skill level or diagnostic ability, another may not.

Another issue identified in this study is that faculty expectations are dynamic. The faculty in the focus group offered that they altered their expectations based on the student year, the service in which they were seeing patients and the difficulty level of the patient. Kern and Mickelson17 identified different objectives or expectations for physical therapy students based on their year in the program. Mays18 found that the importance of various aspects of the examination process also changed with the faculty evaluating physical therapy students. While basic knowledge and the ability to write notes were vital as a junior, in the senior year, critical thinking and interpersonal relations were of greater importance. The grading system at SCO does in fact alter the importance and weighting of various aspects of the grading categories between the third and fourth years, but this is part of the “veil of secrecy” to which the faculty alluded. According to one of the administrators, not allowing the faculty to know that a change takes place in the weighting of categories, and what that change is, was done so as not to inject bias into the grading process. Consideration should be given to whether this policy should be revisited to allow both faculty and students a better understanding of the grading system’s inner workings.

The issue of expectations changing with patient difficulty was questioned in relation to the validity and reliability of assessing the performance in legal education by Grimes and Gibbons.19 Their question was regarding legal clinics and clients of varying type and difficulty, but the concept is the same with optometry. Patient difficulty links to the service in which the patient is examined as well. For example, a patient who has an eye tracking issue may be deemed as easy by faculty experienced in such conditions but considered challenging by faculty who are more experienced with retinal disease. Those faculty members typically work in different clinical services at SCO and, based on the focus group, have different expectations for the student based on the perceived difficulty of the patient and the service in which they were seen.

The influence of changing or unclear expectations voiced by the SCO students was also a concern in a study by Rafiee et al.20 of nursing students and instructors. One student stated the following, which could easily be heard coming out of the mouth of an optometry student in this study’s focus group.

Each instructor has his/her own special rule. Each instructor acts as she/he wishes. We don’t know what the nursing instructors want us to do. We don’t know what we are supposed to learn since the instructors score the students based on their speculations. (p.46)

Overall, unless graders are using the same standards in grading based on a specific set of parameters and are outlining those expectations to the students, there is bound to be confusion and variability in outcomes. This is an issue in many professional programs where clinical grading plays a part in the educational process. Many of the issues that emerged in this study related to expectations are of concern in other health professions as well.

Feedback

Even though there are connections between feedback and reflection, there is a lack of literature on the use of feedback in a formal grading situation. Taylor and Hamdy21 discussed adult medical education and the use of feedback in assessment. They indicate that “any assessment system will provide learners with an indication of where they are going wrong, and which areas they should focus on for clarification of their understanding”. The role of the educator, in their opinion, is to inspire reflection via written feedback. It is the written feedback aspect of the SCO grading system that I believe fulfills this requirement. The quantitative 1 to 3 scale in the SCO system simply gives a grade for that aspect of the clinical experience, but it is the qualitative feedback in the form of what the student did well and what they need to improve that has the potential to spur on student reflection and learning. The concept of clinical grading being used as a method of reflection toward the building of knowledge and clinical skills is lacking in the health profession literature and is absent in the optometric literature. Since good-quality feedback in clinical grading has the potential to lead to reflection, and reflection is part of the cycle of experiential learning,3 it is logical, in my opinion, to connect clinical grading with experiential learning. Viewing clinical grading through an experiential learning lens has the potential to produce a greater number of opportunities for student learning and growth while enabling the optometric program to fulfill its needs and those of its stakeholders.

One of the big themes that emerged in this research centered on faculty feedback having a positive and negative impact on the learning opportunity afforded through the clinical grading system. As Dennick22 concluded, “Reflection is fundamentally enhanced by feedback … Feedback can enable the learner to analyze their actions and understanding and to plan for future learning”. However, based on the student focus groups in this study, the lack of feedback or low-quality feedback can also have a negative impact. The students participating in the focus group indicated that lack of feedback, poor quality of feedback and poor timeliness of grades caused them to decrease how much they looked at their grades and even caused them to think less of certain faculty. If one of the goals of the clinical grading system is to provide feedback on which students can build confidence and learn from or reflect on what they did well or poorly, providing high-level, specific feedback with actionable points is crucial. The concept of the negative impact of the faculty feedback relates to Kolb’s3 theory of experiential learning, specifically the reflection part of the cycle. Students discussed the negative impact of the feedback they received and how it did not enhance their reflection but instead hampered it; this indicates that the reflection link in the experiential learning cycle broke due to the lack of or poor quality of the feedback.

Several studies have examined the characteristics of the feedback made in medical clinical grading. Canavan et al.23 collected 970 surveys of clinical performance from 256 observers. In 210 surveys, comments were considered to be non-behavioral or global assessment and contained comments on the individual’s traits or attitudes (fantastic guy, great physician, etc.). Specific behaviors or instances of behaviors were commented upon in 102 surveys. Comments offering strategies for improvement were both general (33 surveys) and specific (26 surveys) in nature. Based on the findings, the authors stated, “Most feedback comments were positive, self-oriented, and lacked actionable information that would make them useful to learners”.23 The SCO students in the focus group highlighted the issues with feedback quality and how the poor feedback that they received led to them not using the system for personal growth. As in the study by Canavan et al.,23 the students in this study complained of feedback that was general in nature, that lacked specific areas of weakness on which to focus or the potential for improvement, and that was repetitious in nature.

Pulito et al.24 reviewed evaluation forms from medical students’ clerkship rotations. Of the 331 forms analyzed, 115 contained no written comments. The remaining 216 forms contained 1,056 specific comments. The top comment-garnering category by far was professionalism/dependability (412 comments), followed by surgical/medical knowledge (88 comments) and communication skills with other health care professionals (78 comments). Not one comment was made concerning history taking and physical examination skills, ordering of lab tests, or surgical technical skills; these are actionable areas for growth in which specific feedback would prove most beneficial to student growth. Comments related to these three topics would likely have the greatest impact and opportunity to enhance reflection.

Lye et al.,25 in a study of pediatric medicine clerkships, collected 261 evaluations on 157 students. Thirty-four evaluations were eliminated due to lack of comments or a statement by the evaluator that they were “unable to judge the student’s performance” (129). Of the remaining 227 evaluations, 1,017 comments were analyzed. Comments categorized under learner and personal characteristics accounted for 519 (51%) of the total comments made, followed by overall clinic performance (95) and knowledge base (70). Only 311 (31%) comments were related to clinical performance and only 134 of those offered specific details.

The results of these two studies match those of Canavan et al.23: low-quality feedback is not productive and offers little opportunity for student reflection and growth. One interesting statistic from Lye et al.25 is the high number of evaluators who admitted that they were not able to judge the performance of the student. While the paper does not surmise why this might be, perhaps it is related to the fact that the evaluator is typically not with the student and the patient at all times. This was an issue found in the current study as a challenge related to clinical grading.

One topic that emerged from this study related to feedback was timeliness. The SCO students indicated that one of the barriers to getting and incorporating the feedback into their practices was that there was often a delay in when they would get that feedback. In some cases, students waited weeks for their grades to be completed. Timely feedback is listed by Romani and Krackov26 as one of their 12 imperatives for feedback. If provided too late for the student to make use of it, it potentially becomes less relevant.27 The student has already moved past that experience and has seen a multitude of other patients on which their attention is focused and for which feedback may have been timelier. The poor timeliness of the feedback has the ability to stop or to hinder the experiential learning cycle,28 which has an obvious impact on the learning opportunity.

As discussed by the students in this study, clinical grading has the ability to give them confidence and to build them up emotionally. Skinner et al.29 found that students’ confidence and interpersonal skills improved following experiential learning opportunities. The hope is that constructive criticism will boost self-esteem and motivation. There is always the worry that negative criticism may detract from this goal, but this should not stop the faculty member from providing the needed feedback.30 In contrast to the concept of negative feedback having a negative impact on student confidence, Clynes31 found that negative feedback did not have a negative impact on the mentor/student relationship. Plakht et al.32 found that “students suggest that a good supervisor is someone who provides constructive criticism rather than allowing inaccurate practice to continue”. Clynes and Raftery33 reported that students exhibit maturity in their appreciation of the importance of receiving feedback and value the chance to focus on noted weaknesses to improve practice. This concept was echoed by the SCO students on several occasions. Several students acknowledged getting critical feedback, which might have stung at first, but they realized that the feedback was meant to help them recognize their weaknesses and offer the opportunity for growth.

According to Kohn,34 “The best evidence we have of whether we are succeeding as educators comes from observing behavior rather than test scores or grades.” Yorke,35 in discussing formative assessments, under which clinical grading would fall, asks two questions regarding effectiveness, one of which is whether the assessment influenced behavior. This topic was addressed briefly by several of the faculty members in this study. They indicated that they had indeed seen a shift in behavior as a result of the formative assessment, the clinical grades. I have seen these changes personally as well. This is an indication that at least some of the time, the students were indeed reading their grades, reflecting on what was written and making beneficial changes in their knowledge or skills.

Subjectivity and Challenges

The theme that encompassed subjectivity in grading and challenges that inhibit effective use contains subthemes that are evident in the literature. Subjectivity in clinical assessment or grading is not an issue limited to an optometric curriculum such as SCO’s. Even though the grading system was designed to be objective, it definitely struggles to be anything but subjective. Grading is based on values, experiences and expectations, which are personal in nature, as was discussed previously.36 In a qualitative study of 11 clinical nursing faculty by Amicucci,37 the term “gray” was specifically used by nine of the participants in their discussion of clinical grading. In this study, three of six faculty members and all three administrators used the term “subjective” to describe clinical grading.

Not only can subjectivity affect the reliability and credibility of the assessment system, it also has the potential to impact learning negatively, as the students’ takeaway is that the system is arbitrary and they subsequently devalue it.38 The subjectivity, or changing expectations, was a notable frustration for the SCO students and played a part in their declining use of the grading system as their training progressed.

Connected to subjectivity is the ability of the faculty to assess everything that takes place in the patient encounter. Pulito et al.24 asked medical student preceptors to rate, out of ten, how effectively they witness, or infer from other performances, each of the 11 performance categories and their ability to evaluate each one. Professionalism/dependability, knowledge and clinical reasoning/judgement were rated as observable. Categories such as basic clinical skills, history/physical examination skills and interpersonal skills with patients were considered usually not observable, and were the most likely to be difficult to evaluate and to be inferred from other performance. According to the SCO students, the faculty member not being in the room is a barrier to effective grading. This impression is supported by Canavan et al.23 in a study of medical residents. It was specifically noted that 8.2% of surveys contained comments remarking on limited or no exposure, or on hearsay, in the grading process. While in a perfect world, the faculty/student ratio would be 1:1, with no division of attention, that is all but impossible. At SCO, the ratio is typically 1:4, which is fairly standard throughout clinical optometric practice. As shown by Pulito et al.,24 faculty cannot observe every aspect of the examination process, which injects an even greater level of subjectivity into the clinical grading process.

Another challenge in clinical grading assessment that is by no means limited to SCO is that those individuals doing the grading hesitate to fail students who deserve to fail. Essentially, nobody wants to be the bad person. Duffy39 found that preceptors continued to pass students, allowing the student’s personal issues to cloud their judgement. Dudek et al.40 identified four major barriers to failing medical trainees (students or residents): lack of documentation, lack of knowledge about what specifically to document, anticipating an appeal process, and lack of remediation options. Other reasons for not failing students include lack of staff training or inadequate support, negative consequences for those doing the grading including potential litigation, hostility and manipulation by students, staff shortages,41 and hesitation on the part of the preceptor to identify or to resolve the concerns early enough in the clinical rotation.42

Failing a student can be emotionally stressful and can conjure feelings of loneliness for the faculty member. This is especially true for novice or part-time faculty.43 On the positive side, in a survey of 390 nurse faculty, “nearly half the sample reported changes in their teaching practices following the deliberation to assign a failing grade”.44 The changes made were in areas related to communication, remediation, documentation and professional growth, among others. While failing a student can be a challenge, the opportunity to alter behavior and to grow from the experience is not limited to the student.

Not Understanding the Clinical Grading System

Not understanding the clinical grading system appeared in the data from all three groups. The student and faculty frustration focused on a lack of training and having to determine on their own what the different quantitative variables mean. The faculty also expressed an issue with the lack of guidance in grading and how to set their expectations. The administration corroborated the lack of training and guidance on a continual basis after the initial onboarding process of both students and faculty. In a study of nurse assessment, one theme that arose was a poor understanding of the assessment tool, despite a required training. This concern was voiced by both the students and faculty.45

McDonald,43 in a discussion of grading fairly and consistently, highlights the idea of discussing grading criteria with all graders in order to align perceptions of the grading system and bring consistency to perspectives. He suggests giving a few samples for grading, comparing the grades and then discussing the grading criteria. Clement and Raleigh47 reviewed 38 studies on nursing clinical assessment. They recommend regular training and peer review. Alpine et al.48 performed a before-and-after study with 58 nursing instructors. The participants watched videos of poor and good performance and graded performance on several factors. They then underwent training and a discussion of the criteria and the videos that they previously graded. They were asked to reconsider their grades if they felt it was appropriate. About 50 to 55% of the participants changed their grades. Interestingly, a larger number changed their scores in the negative direction, lowering scores, both for the poor (53.5%) and good performance (37.4%). In contrast, scores for the good performance were about four times more likely to be raised than scores for the poor performance (12.9% vs. 3.1%). The authors showed the immediate impact of training on grading outcomes, including reliability and validity. Proper orientation and continued training and guidance are a must in order for an assessment system to function effectively,47 especially as the faculty changes from year to year. Having routine training would also help reduce errors and grade inflation, a concern in the assessment process.49

Limitations

There are more than 270 students in the third and fourth years in the SCO population. Despite attempts to get a wide variety of input based on academic standing, I struggled to get participation in the focus groups, although I ultimately succeeded. Whether the opinions expressed by this group of students truly represent those of the greater number of students is a potential limitation. Whether the group of faculty members selected represents the greater faculty opinion is also a potential limitation. Since the focus group contained a variety of faculty based on service and years at SCO, this is less of a concern. Another limitation relates to the students in the focus group. The fourth-year students were all in the final semester of their final year and were quite close to graduation. Whether they are comparable to students earlier in their fourth year is a potential limitation. A potential limitation for all groups is comfort using Microsoft Teams, but since this study was conducted after the pandemic, during which individuals gained significant experience using this meeting software, this limitation should be minimal.

Recommendations Based on the Study

One of the goals of this study was to make recommendations to the administration for potential changes to improve the functionality and impact on learning of the clinical grading system at SCO. Based on the three interviews and two focus groups, the following recommendations should be considered and are linked back to the results of the study themes and subthemes in Table 2:

- An enhanced orientation on the clinical grading system for all new faculty should be initiated to ensure a good understanding prior to grading students. This would include case examples based on the services in which the faculty would spend a significant amount of time, a detailed orientation on the history of the grading system, how it works, including category weighting changes between third and fourth year, and a better understanding of the grading expectations from an administrative viewpoint. The orientation period would also include a review of a new faculty member’s grades to catch and correct inconsistencies, as well as to provide guidance as new situations arise, throughout their first year of teaching.

- A regular discussion of the grading system by the faculty as a whole, including enhanced guidance from the administration, should be initiated and done yearly. This should include a better understanding of how the grading system works behind the scenes for the faculty and a series of examples to encourage discussion on grading expectations in order to reduce subjectivity and variability. This would allow faculty to be immediately aware of any changes made to the grading system.

- Eliminate the requirement for feedback on certain types of follow-up eye examinations like simple dilated fundus examinations, spherical contact lens checks and basic examinations, reducing the number of perfunctory comments like “good job” and “well done.” This will allow students to focus on feedback that enhances learning and reflection without having to sift through perfunctory comments to find it. This will also allow faculty more time to provide feedback on encounters that enhances learning and student reflection. Another consideration would be to add the option of “NA” for the quantitative categories since one of the challenges is that the faculty are not present for the entire patient encounter.

- An enhanced orientation on clinical grading for all students, including case examples of what the various levels (1-3) equate to clinically, how the grading system works, and how they can use it for learning purposes, should be initiated to ensure a good understanding prior to starting patient care in the third year, as well as a follow-up to ensure an appropriate level of understanding as they progress throughout their careers.

- Require all faculty members to write and deliver to students, prior to working with them, detailed expectations for patient care. If the faculty member’s expectations vary based on the student’s experience (third or fourth year) or on the service in which the care is delivered, they should be prepared with different versions of their student expectations.

- Written faculty feedback to students should corroborate and support their verbal feedback and be as specific and detailed as possible. Faculty should attempt to include resources such as articles, online videos and websites to encourage students toward life-long learning and enhance the ability for reflection.

- Clinical course syllabi expectations should be updated and made as service-specific as possible. The syllabi must be shared with both the faculty and students so that they can coordinate service expectations with their own. The service expectations should be presented during student orientations.

- Add opportunities for the students to ask questions of the faculty within the grading system. As indicated by the students, this would enhance their ability to use the system for learning. This would be a key step in helping students in the reflection phase of the experiential learning cycle: a student who asks a question has already reflected on the patient experience and is seeking further explanation and resources.

- Require all grades to be completed within a short time period (within 3 business days) to give students the greatest opportunity for reflection.

- Require all grades to be acknowledged in some manner by the students, especially grades marked as “below expected.” Ensuring that students are seeing their grades, good and bad, increases the likelihood that they see the feedback and consciously or subconsciously have the opportunity to reflect on the written feedback from faculty.

- Increase the importance of clinical grading in the faculty evaluation with an emphasis on grading as teaching. If the mission of the college is to educate students, and clinical grading is a means of doing so, then not completing that task should carry a penalty for the faculty. While it already does on some level, perhaps that is not enough, and the importance of properly completing clinical grades should weigh more heavily in the annual review process.

Table 2. Summary Recommendations Linked with Themes and Subthemes Developed in this Study.