PEER REVIEWED

Comparing self, peer, and faculty assessments within an interprofessional hybrid course

Jasmine Wong Yumori OD, FAAO, Dipl AAO, FNAP, Kierstyn Napier-Dovorany OD, FAAO, JaeJin An, PhD

Abstract

Providing effective and timely feedback to students on interprofessional communication skills, particularly within courses with large enrollment, can be difficult. This study compared faculty to self- and peer assessments of consultations/referrals written by second year optometry students enrolled in an interprofessional hybrid course. Results demonstrated that the highest scores were generally seen from self-assessments, followed by peer, and lastly faculty assessments. Since correlations between faculty and both self- and peer assessments were weak, more investigation is needed to evaluate how and when to best use self and peer assessment.

Keywords

Introduction

Effective interprofessional communication skills are critical in achieving efficient and safe care of patients.1 Written consultation and referral requests are the most common methods by which healthcare providers communicate crucial patient information.2 Poor communication can lead to increased healthcare costs and delays or even errors in diagnosis.3 Thus, it is essential for health professional students, including optometry students, to practice and receive feedback on writing consultations/referrals prior to direct involvement in the clinical care of patients. As stated by the Association of Schools and Colleges of Optometry, schools and colleges of optometry shall ensure that before graduation each student will have demonstrated “effective communication skills, both oral and written, as appropriate for maximizing successful patient care outcomes”.4

Providing effective and timely feedback from faculty regarding communication skills can be difficult, particularly in courses with large student enrollment. Self- and peer assessments have been shown to serve as learning tools to stimulate student participation, allow students to gain insight into their own performance, promote self-directed lifelong learning,5-8 build reflection and self-awareness skills,9 and provide constructive feedback.10 The ability to understand one’s own strengths and weaknesses through the process of self-assessment is an important component in health professions training.11,12

While self- and peer assessments have been shown to be effective learning tools, there are mixed impressions regarding their use as assessment tools.6,7,13-17 The goal of this study is to identify similarities and differences between optometry students’ self-assessments of written interprofessional communication skills and assessments from peers and faculty, and thus to evaluate whether self- and peer assessment are reliable assessment tools in this context.

Methods

Description of the Course

Team Training in Healthcare I (IPE 6000) is a course at Western University of Health Sciences. During the Fall 2015 delivery of IPE 6000, the course had over 850 students enrolled across eight health professions programs (dental medicine, graduate nursing, optometry, osteopathic medicine, pharmacy, physical therapy, podiatric medicine and veterinary medicine) on two campuses (Pomona, California, and Lebanon, Oregon) and was mainly delivered online. Students enrolled in IPE 6000 completed written consultations/referrals based on a case created by faculty from students’ respective professions. The process and rubric were introduced to students through a video briefing session.

Self- and Peer Assessments

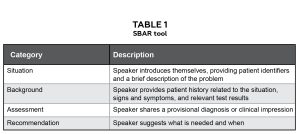

Table 1: SBAR tool.

After students uploaded their consultations/referrals onto a Learning Management System (Blackboard), they were asked to provide self-assessments and anonymous peer assessments from two health professions students enrolled in the course using a generalized rubric focused on interprofessional communication skills. Given the randomization process, peer assessments were completed by students from any of the enrolled eight health professions programs. Thus, the peer feedback could have been done by an optometry or a non-optometry student. The design of the rubric was based on the SBAR (Situation-Background-Assessment-Recommendation) tool from the Institute for Healthcare Improvement (Table 1)18 and the core competencies from the Interprofessional Education Collaborative.19 Consultations/referrals written by optometry students only were evaluated in this study.

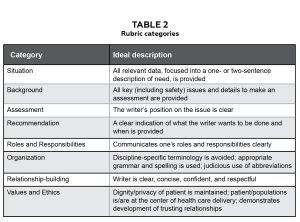

Table 2: Rubric categories.

The specific rubric categories were Situation, Background, Assessment, Recommendation, Roles and Responsibilities, Organization, Relationship-building and Values and Ethics (Table 2). Ideal descriptions for each category were provided. For each rubric category, students had to rate performance as Ineffective, Somewhat effective or Effective. Students were provided with the opportunity to provide qualitative feedback for each category, asked to provide an overall rating and encouraged to provide overall qualitative feedback.

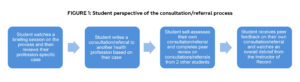

Students subsequently received peer feedback on their own consultation/referral and a video debrief with overall feedback from the instructor of record (Yumori) was provided after the completion of each case. Figure 1 summarizes the student perspective of the consultation/referral process.

Figure 1: Student perspective of the consultation/referral process.

Faculty Assessment

Two college of optometry faculty members (Yumori and Napier-Dovorany) completed faculty assessments on consultations/referrals written by second-year optometry students enrolled in the Fall 2015 delivery of IPE 6000. To reduce inter-rater variability, the faculty members separately evaluated three consultations/referrals using the same rubric as used by students (Figure 1) and then discussed scores. After this step, each faculty member then independently evaluated 29 consultations/referrals using the same rubric. Consultations/referrals were de-identified prior to the faculty assessment process.

Statistical Analysis

With identifying information removed, a total of 58 consultations/referrals written by optometry students were included for this study. Ten consultations/referrals were excluded from analysis due to missing self- or peer assessments, and 48 consultations/referrals were used for analysis. For each consultation/referral, the optometry student’s self-assessment was given a numeric composite score, with one point for Ineffective, two points for Somewhat effective and three points for Effective across each of the eight rubric categories (Situation, Background, Assessment, Recommendation, Roles and Responsibilities, Organization, Relationship-building and Values and Ethics). The same process was used to calculate the numeric composite score from each peer (two total) and from the faculty assessment. The Peer Average was calculated by averaging the numeric composite scores from both peer assessments. Descriptive statistics and intra-class correlation coefficients were applied to compare scores between Self and Peer Average, Peers (Peer 1 vs. Peer 2), Self and Faculty, and Peer Average and Faculty. Scores were also categorized (23-24, 21-22, 19-20, and 18 or lower) and Cohen’s kappa statistics and 95% confidence intervals were calculated to assess for agreement between Self and Peer Average, Peer 1 and Peer 2, Self and Faculty, and Peer Average and Faculty. This study was approved by the Institutional Review Board at Western University of Health Sciences.
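The scoring scheme described above can be illustrated with a minimal Python sketch. The function and variable names are hypothetical and are not taken from the study. The handling of half-point Peer Averages (for example, 22.5) is an assumption, since the text does not state which band such values fall into; here they are placed in the lower band.

```python
# Points per rating level, as described in the Statistical Analysis section.
RATING_POINTS = {"Ineffective": 1, "Somewhat effective": 2, "Effective": 3}

# The eight rubric categories.
CATEGORIES = [
    "Situation", "Background", "Assessment", "Recommendation",
    "Roles and Responsibilities", "Organization",
    "Relationship-building", "Values and Ethics",
]


def composite_score(ratings):
    """Sum the points over all eight categories (possible range: 8 to 24)."""
    return sum(RATING_POINTS[ratings[category]] for category in CATEGORIES)


def peer_average(peer1_ratings, peer2_ratings):
    """Average the composite scores from the two peer assessments."""
    return (composite_score(peer1_ratings) + composite_score(peer2_ratings)) / 2


def score_band(score):
    """Assign a composite score to one of the four bands used for kappa.

    Assumption: a half-point average such as 22.5 falls in the lower band.
    """
    if score >= 23:
        return "23-24"
    if score >= 21:
        return "21-22"
    if score >= 19:
        return "19-20"
    return "18 or lower"


# Hypothetical example: one peer rates every category Effective, the other
# rates Assessment as Somewhat effective and everything else Effective.
all_effective = {category: "Effective" for category in CATEGORIES}
one_lower = dict(all_effective, Assessment="Somewhat effective")

print(composite_score(all_effective))                      # 24
print(peer_average(all_effective, one_lower))              # 23.5
print(score_band(peer_average(all_effective, one_lower)))  # 23-24
```

Because each category contributes between one and three points, the composite score can only range from 8 to 24, which leaves little room to separate assessors when most ratings are Effective.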

Results

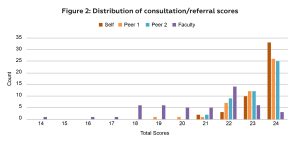

Overall, mean (SD) composite scores were relatively high (23.5 (0.8) for Self, 23.2 (0.7) for Peer Average, and 20.7 (2.2) for Faculty), with Self and Peer assessments tending to show higher scores compared to Faculty (Figure 2).

Figure 2: Distribution of consultation/referral scores.

Intra-class correlation coefficients using composite scores were “highest” between Peers (Peer 1 and Peer 2) at 0.13, followed by Self and Peer Average at 0.12, Peer Average and Faculty at 0.06, and lowest for Self and Faculty scores at -0.01.
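For readers unfamiliar with the statistic, the following Python sketch shows one way an intra-class correlation coefficient can be computed for two assessors. The article does not state which form of the coefficient was used, so the one-way random-effects, single-measure form shown here is an assumption, and the function name and example scores are hypothetical.

```python
def icc_oneway(scores):
    """One-way random-effects, single-measure ICC.

    `scores` is a list of rows, one per consultation/referral, where each
    row holds the composite scores given by the assessors being compared.
    """
    n = len(scores)       # number of consultations/referrals
    k = len(scores[0])    # number of assessors per consultation/referral
    grand_mean = sum(sum(row) for row in scores) / (n * k)
    row_means = [sum(row) / k for row in scores]

    # Between-target and within-target mean squares from one-way ANOVA.
    ms_between = k * sum((m - grand_mean) ** 2 for m in row_means) / (n - 1)
    ms_within = sum(
        (x - m) ** 2 for row, m in zip(scores, row_means) for x in row
    ) / (n * (k - 1))

    return (ms_between - ms_within) / (ms_between + (k - 1) * ms_within)


# Hypothetical data: identical scores from both assessors.
perfect = [(20, 20), (22, 22), (24, 24)]
print(icc_oneway(perfect))  # 1.0

# Hypothetical data: one assessor scores near the ceiling while the other
# scores consistently lower, which yields a negative coefficient.
offset = [(24, 20), (24, 22), (23, 18), (24, 21)]
print(icc_oneway(offset) < 0)  # True
```

In this form, a systematic gap between two assessors counts as disagreement, so near-ceiling scores from one assessor paired with lower scores from the other can push the coefficient to zero or below.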

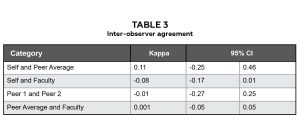

Table 3: Inter-observer agreement.

When composite scores were categorized (23-24, 21-22, 19-20, and 18 or lower), Cohen’s kappa statistics and 95% confidence intervals were 0.11 (-0.25 to 0.46), -0.01 (-0.27 to 0.25), and 0.001 (-0.05 to 0.05) for Self and Peer Average, Peer 1 and Peer 2, and Peer Average and Faculty, respectively (Table 3).
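The kappa statistic corrects raw agreement for the agreement expected by chance. The Python sketch below illustrates the standard unweighted Cohen’s kappa formula on hypothetical banded scores. It is not the study’s analysis code, the function name is an assumption, and confidence intervals are omitted.

```python
from collections import Counter


def cohens_kappa(rater_a, rater_b):
    """Unweighted Cohen's kappa for two equal-length lists of categories."""
    n = len(rater_a)
    # Proportion of items on which the two raters chose the same band.
    observed = sum(a == b for a, b in zip(rater_a, rater_b)) / n

    # Chance agreement from each rater's marginal band frequencies.
    counts_a = Counter(rater_a)
    counts_b = Counter(rater_b)
    expected = sum(counts_a[band] * counts_b[band] for band in counts_a) / (n * n)

    if expected == 1:
        # Both raters used one identical band throughout: kappa is undefined.
        return float("nan")
    return (observed - expected) / (1 - expected)


# Hypothetical example: the first rater places every score in the top band,
# and the second rater does so for three of the four consultations/referrals.
rater_a = ["23-24", "23-24", "23-24", "23-24"]
rater_b = ["23-24", "23-24", "23-24", "21-22"]
print(cohens_kappa(rater_a, rater_b))  # 0.0
```

In this example the raters agree on 75% of items, yet kappa is 0 because that level of agreement is exactly what chance would produce when nearly all scores sit in the top band.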

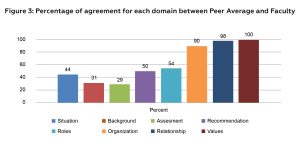

Agreement differences between the Peer Average and Faculty scores varied based on rubric categories (Figure 3). Higher agreement was seen with the Values and Ethics (100%), Relationship-building (98%), and Organization (91%) categories. Lower levels of agreement were seen between the Peer Average and Faculty scores on the Assessment (30%), Background (35%), Situation (43%), Recommendation (48%), and Roles and Responsibilities (54%) categories.

Figure 3: Percentage of agreement for each domain between Peer Average and Faculty.

Discussion

In this study, which compared self-, peer and optometry faculty assessments of consultations/referrals written by second-year optometry students within an interprofessional education course, scores were relatively high overall, with generally higher scores from self-assessments, followed by peer assessments and lastly assessments from faculty. The tendency of students to self-rate their communication abilities highly is common, as seen in the study by Chu and Woo, where 96% of optometry students self-rated “use of written and oral communication that is understandable to patients, families and other healthcare team members” as an area of strength.20 Papinczak, Young, Groves and Haynes highlight that students with greater self-efficacy tend to score their performance higher and found moderate correlations between peer and tutor assessments.9 This is similar to our statistical agreement, with the “highest” intra-class correlation coefficient between peers and lowest between self and faculty. However, given that stratification was done by category with good agreement in Organization, Relationship-building and Values and Ethics and lower agreement in the SBAR dimensions, there is poor overall agreement. This difference in agreement is likely due to the shared perspectives peers encompass compared to the differences in level of development and experience between students and faculty. Over-estimation common in self-grading may also have been a contributing factor.21

Weak agreement between self-, peer and faculty assessments may also be partly based on differences across rubric categories, with more agreement seen between peers and faculty in more subjective categories (Values and Ethics, Relationship-building and Organization). Such categories may thus be appropriate to assign to peers from an assessment perspective, allowing faculty to dedicate more time in providing feedback to other sections that perhaps require more experience. While self- and peer assessments may not align closely with faculty evaluations and self-assessment accuracy can change over time,22,23 these strategies offer benefits that should not be overlooked. Self-grading has been shown to promote self-regulation of learning,24 improve metacognition,21 and support lifelong learning,25 all of which are crucial skills for healthcare professionals, including optometrists. Peer assessment helps students develop critical evaluation skills and exposes them to diverse perspectives, which is essential for effective interprofessional communication. Both self- and peer assessments allow students to be more active and motivated throughout the learning process,26 especially in lower stakes learning opportunities. Furthermore, value may lie in the learning process itself rather than in producing scores that match faculty grading. These methods can serve as powerful complementary tools to faculty assessment, particularly in large courses where timely, individualized feedback from instructors may be challenging to provide.

When composite scores were categorized, little to no agreement was seen: there was no agreement between self and faculty, between peers, and between the peer average and faculty assessments, and slight agreement between the peer average and self-assessments.27 We believe the weak to no agreement may be due in part to ceiling effects. Trudel and colleagues shared two definitions of ceiling effects:28 the first is “an intervention having limited effect because the population is already at, or near, a pinnacle point,”29 which may fit with our study that involved optometry students, who must be highly qualified academically in order to enter an optometry program.30

The second definition Trudel and colleagues reference regarding ceiling effects,28 those caused by “the limitation of an assessment to capture the extent and variance of accomplishment because essentially the assessment is too simplistic,”29 is also appropriate. It is possible that the profession-specific case the second-year optometry students based their consultations/referrals on was too simple and/or the rubric was not specific or sensitive enough to capture meaningful differences. Selecting a more complex case and using more specific assessment tools such as checklists, which provide more structured guidance to students, may correct this measurement problem that limits our ability to assess accurately.31,32 Other course-specific characteristics, such as providing more extensive training sessions23 and more detailed rubric criteria,33 may additionally influence students’ accuracy in self- and peer scoring, with faculty scores as comparison.

Future directions involve enhancing the rubric design by incorporating detailed descriptors that clearly define the criteria for each performance level: Ineffective, Somewhat effective and Effective. Capturing student-specific characteristics such as overall academic performance, gender, self-efficacy and competency to see whether these affect self-, peer and faculty assessments of optometry students’ interprofessional communication skills may also be helpful. Additional future directions include assessing optometry students’ performance as they gain more clinical experience, comparing assessments from and of optometry students across different institutions, and expanding this work beyond the profession of optometry to allow comparisons between different professions.

It is important to note that based on the course and Learning Management System design, it was not possible for us to control for which health professional student evaluated which consultation/referral. It would be exciting to see whether there is a difference in assessments from optometry versus non-optometry students and furthermore between optometry faculty members (as seen in this study) compared to faculty from other health professions. Other areas to explore include featuring other types of clinical cases to see whether more complex cases demonstrate less influence from ceiling effects and any differences between written and verbal interprofessional communication.

Moving forward, educators should consider implementing a balanced approach that combines faculty, self- and peer assessments. This multi-faceted strategy can provide students with a more comprehensive learning experience, fostering critical skills such as self-reflection, peer evaluation and interprofessional communication. However, it is crucial to provide clear grading criteria and training for students to maximize the effectiveness of self- and peer assessments.

Conclusion

This study highlights the challenges of relying solely on self- and peer assessments in interprofessional communication skills training, especially in large courses. The weak correlations between faculty, self- and peer evaluations suggest students may lack the objectivity or experience to accurately assess their own and their peers’ performance in these complex skills.

Instructors need to re-evaluate how we use self- and peer assessments, perhaps shifting towards incorporating more structured assessment rubrics, providing explicit training on evaluation criteria, and increasing direct faculty feedback, even in large enrollment courses. Breaking down communication skills into smaller, more observable behaviors that are easier for students to evaluate may also be appropriate.

This experience furthermore underscores the need to design activities that specifically encourage students to calibrate their self-perception against expert feedback. If self- and peer assessment are maintained as valuable components of an IPE course, students can be advised to reflect on expert observations and feedback so that they may be more calibrated in future iterations of assessment.

Ultimately, if there is a desire for students to develop effective interprofessional communication skills, assessment methods need to be valid, reliable and provide meaningful feedback that drives improvement. This will allow students to become active, self-directed lifelong learners, build reflection and self-awareness skills and provide constructive feedback, all of which are crucial skills to develop in optometry and all health professional students.

References

- Keller KB, Eggenberger TL, Belkowitz J, Sarsekeyeva M, Zito AR. Implementing successful interprofessional communication opportunities in health care education: a qualitative analysis. Int J Med Educ. 2013 Dec 22;4:253-9. DOI: 10.5116/ijme.5290.bca6

- François J. Tool to assess the quality of consultation and referral request letters in family medicine. Can Fam Physician. 2011 May;57(5):574-5.

- Epstein RM. Communication between primary care physicians and consultants. Arch Fam Med. 1995 May;4(5):403-9. DOI: 10.1001/archfami.4.5.403

- Smythe JL, Daum KM. Attributes of Students Graduating from Schools and Colleges of Optometry: A 2011 Report from the Association of Schools and Colleges of Optometry [Internet]. Rockville, MD: Association of Schools and Colleges of Optometry; c2011 [cited 2019 October 2]. Available from: https://optometriceducation.org/files/2011_AttributesReport.pdf

- Speyer R, Pilz W, Van Der Kruis J, Brunings JW. Reliability and validity of student peer assessment in medical education: a systematic review. Med Teach. 2011;33(11):e572-85. DOI: 10.3109/0142159X.2011.610835

- Wagner ML, Suh DC, Cruz S. Peer- and self-grading compared to faculty grading. Am J Pharm Educ. 2011 Sep 10;75(7):130. DOI: 10.5688/ajpe757130

- Tornwall J. Peer assessment practices in nurse education: An integrative review. Nurse Educ Today. 2018 Sep 21;71:266-75. DOI: 10.1016/j.nedt.2018.09.017

- Dolmans D, Schmidt H. The advantages of problem-based curricula. Postgrad Med J. 1996 Sep;72(851):535-8. DOI: 10.1136/pgmj.72.851.535

- Papinczak T, Young L, Groves M, Haynes M. An analysis of peer, self, and tutor assessment in problem-based learning tutorials. Med Teach. 2007 Jun;29(5):e122-32. DOI: 10.1080/01421590701294323

- Anderson PA. Giving Feedback on Clinical Skills: Are We Starving Our Young? J Grad Med Educ. 2012 Jun;4(2):154-8. DOI: 10.4300/JGME-D-11-000295.1

- Epstein RM. Assessment in medical education. N Engl J Med. 2007 Jan 25;356(4):387-96. DOI: 10.1056/NEJMra054784

- Batalden P, Leach D, Swing S, Dreyfus H, Dreyfus S. General competencies and accreditation in graduate medical education. Health Aff (Millwood). 2002 Sep-Oct;21(5):103-11. DOI: 10.1377/hlthaff.21.5.103

- Hanrahan S, Isaacs G. Assessing Self- and Peer-Assessment: The Students’ Views. Higher Education Research & Development. 2001 May;20(1):53-70.

- Magin D. A Novel Technique for Comparing the Reliability of Multiple Peer Assessments with that of Single Teacher Assessments of Group Process Work. Assessment & Evaluation in Higher Education. 2001 Jun;26:139-52. DOI: 10.1080/02602930020018971

- Rudy DW, Fejfar MC, Griffith CH 3rd, Wilson JF. Self- and Peer Assessment in a First-Year Communication and Interviewing Course. Eval Health Prof. 2001 Dec;24(4):436-45. DOI: 10.1177/016327870102400405

- Fitzgerald K, Vaughan B. Learning through multiple lenses: analysis of self, peer, near-peer and faculty assessment of a clinical history taking task in Australia. J Educ Eval Health Prof. 2018 Sep 18;15:22. DOI: 10.3352/jeehp.2018.15.22

- Tai JH, Canny BJ, Haines TP, Molloy EK. The role of peer-assisted learning in building evaluative judgement: opportunities in clinical medical education. Adv Health Sci Educ Theory Pract. 2015 Dec 12;21(3):659-76. DOI: 10.1007/s10459-015-9659-0

- Institute for Healthcare Improvement. SBAR Tool: Situation-Background-Assessment-Recommendation [Internet]. Cambridge, MA: Institute for Healthcare Improvement; [cited 2019 July 12]. Available from: http://www.ihi.org/resources/Pages/Tools/SBARToolkit.aspx

- Interprofessional Education Collaborative Expert Panel. Core competencies for interprofessional collaborative practice: Report of an expert panel. Washington, DC: Interprofessional Education Collaborative; 2011.

- Chu RH, Woo S. Student Perceptions of Attaining the Association of Schools and Colleges of Optometry Graduate Attributes. Optometric Education. 2021 Fall;47(1).

- Jackson MA, Tran A, Wenderoth MP, Doherty JH. Peer vs. Self-Grading of Practice Exams: Which Is Better? CBE Life Sci Educ. 2018 Sep 1;17(3):es44. DOI: 10.1187/cbe.18-04-0052

- Fitzgerald JT, White CB, Gruppen LD. A longitudinal study of self-assessment accuracy. Med Educ. 2003 Jul;37(7):645-9. DOI: 10.1046/j.1365-2923.2003.01567.x

- Blanch-Hartigan D. Medical students’ self-assessment of performance: results from three meta-analyses. Patient Educ Couns. 2011 Aug 14;84(1):3-9. DOI: 10.1016/j.pec.2010.06.037

- Eccles JS, Wigfield A. Motivational beliefs, values, and goals. Annu Rev Psychol. 2002;53:109-32. DOI: 10.1146/annurev.psych.53.100901.135153

- Sambell K, McDowell L, Brown S. But is it fair?: An exploratory study of student perceptions of the consequential validity of assessment. Studies in Educational Evaluation. 1997;4:349-71.

- Edwards N. Students self-grading in social statistics. College Teaching. 2007;55(2):72-6. DOI: 10.3200/CTCH.55.2.72-76

- McHugh ML. Interrater reliability: the kappa statistic. Biochem Med (Zagreb). 2012;22(3):276-82.

- Trudel MC, Marsan J, Paré G, et al. Ceiling effect in EMR system assimilation: a multiple case study in primary care family practices. BMC Med Inform Decis Mak. 2017 Apr 20;17(1):46. DOI: 10.1186/s12911-017-0445-1

- Judson E. Learning about bones at a science museum: examining the alternate hypotheses of ceiling effect and prior knowledge. Instr Sci. 2011 Nov;40(6):957-73. DOI: 10.1007/s11251-011-9201-6

- Association of Schools and Colleges of Optometry. Annual Student Data Report [Internet]. Rockville, MD: c2018 [cited 2019 September 9]. Available from: https://optometriceducation.org/wp-content/uploads/2018/05/ASCO-Student-Data-Report-2017-18.pdf

- Hetsroni A. Ceiling effect in cultivation: general TV viewing, genre-specific viewing, and estimates of health concerns. Journal of Media Psychology: Theories, Methods, and Applications. 2014 Mar;26(1):10-8. DOI: 10.1027/1864-1105/a000099

- Vita S, Coplin H, Feiereisel KB, Garten S, Mechaber AJ, Estrada C. Decreasing the ceiling effect in assessing meeting quality at an academic professional meeting. Teach Learn Med. 2013;25(1):47-54. DOI: 10.1080/10401334.2012.741543

- Ward M, Gruppen L, Regehr G. Measuring self-assessment: current state of the art. Adv Health Sci Educ Theory Pract. 2002;7(1):63-80. DOI: 10.1023/a:1014585522084