PEER REVIEWED

Development and Validation of an Analytic Rubric for Assessing Retinal OCT Skills in Optometry and Ophthalmology Training

Sethumathi Gouragari, BS(Optom), MBA, Sumasri Kallakuri, M(Optom), Teresa SL Tee, PhD, Snigdha Snigdha, BS(Optom), MBA, Avinash Pathengay, DO, FRCS, Ruby Kala Prakasam, MSc, PhD

Abstract

Purpose: The study aims to develop and validate an analytic rubric for assessing the skills of optometry and ophthalmology trainees in performing and interpreting retinal optical coherence tomography (OCT), focusing on five key competencies: clinical etiquette, pre-procedure, procedure, accuracy of the procedure and interpretation of results.

Methods: During the development phase, the authors identified the fundamental technical skills and behavioural descriptors associated with retinal OCT procedures. Trainees’ expected outcomes were defined, and specific tasks were categorized into five domains, each with four levels of competence. In the validation phase, the authors created 11 questions, one for face validation and 10 for content validation. Invitations were extended by email to internal and external experts in ophthalmology and optometry. Participants were provided with the OCT rubric and validation questions. The validation questions were designed to assess the appropriateness and applicability of the tool and its components, including missing information, iteration or deletion of items and scoring distribution.

Results: Ten internal and 11 external validators participated in the study, and over 86% responded positively to the survey questions, with 11% offering additional validation-related comments. Approximately 12% of validators responded negatively, with 5% providing validation-related comments. The authors carefully analysed the feedback and incorporated changes that were agreed on unanimously. The validators proposed changes like acquiring consent before performing a test, effective communication with patients, information regarding protocol selection and potential artefacts and distribution of scores between domains.

Conclusion: This analytic rubric for assessing retinal OCT skills can be a valuable educational tool for evaluating trainees’ competence in performing and interpreting retinal OCT examinations.

Key Words: optical coherence tomography, skills assessment, analytic rubric, clinical etiquette, accuracy, retinal OCT skills, ophthalmology training, optometry training

Background

Globally, 2.2 billion individuals have vision impairment or blindness, and over one billion of these cases are caused by unaddressed preventable ocular conditions.1 The key to resolving this challenge is making eyecare services and timely interventions accessible. Evidence-based medical practices suggest that early diagnosis and treatment methods are pivotal.2 Early intervention strengthens treatment outcomes and restores or preserves visual function in complex ocular diseases, such as age-related macular degeneration and diabetic retinopathy. These retinal conditions, left untreated, can lead to irreversible damage and vision loss.

Continuous innovations in ocular imaging technology have predominantly focused on refining the technical aspects of clinical imaging to address critical concerns. These advancements have led to the development of non-invasive, high-speed and high-quality images that enable precise and early diagnosis of ocular ailments. Optical coherence tomography (OCT)3,4,5 stands at the forefront of ocular imaging, functioning as a valuable biomarker for the early detection of retinal diseases. The evolution of OCT, transitioning from Time-Domain to Spectral-Domain to Swept-source,6,7 has empowered the acquisition of cross-sectional B-scan images of both retinal and anterior segment structures.

This has significantly elevated the axial resolution and imaging speed, surpassing the threshold of 25,000 A-scans per second. However, this surge in technical advancement necessitates a parallel investment in highly skilled eyecare professionals.8 Beyond performing OCT procedures, clinician optometrists and ophthalmologists must demonstrate proficiency in image interpretation, clinical reasoning and interconnecting diagnostic outcomes with the patient’s holistic clinical presentations to prescribe appropriate management protocols. While the curricula of undergraduate and postgraduate optometry/ophthalmology training encompass the evaluation of diagnostic skills, there is an absence of robust rubric-based evaluation methods to score their competency levels.

A rubric is a widely used scoring tool in medical education.9-13 It presents a structured, objective mechanism that limits subjective influences, supporting an equitable evaluation of a trainee’s performance. However, to our knowledge, a rubric-based assessment tool specific to retinal OCT has not been reported in the literature. Therefore, in this study, we have developed and validated an analytic rubric to evaluate trainees’ proficiency in performing and interpreting retinal OCTs.

Materials and Methods

The present study was reviewed by the scientific committee and initiated at LV Prasad Eye Institute, India, and received an exemption from the Institutional Review Board (IRB), as it does not involve experimental human or animal research. The study adhered to the tenets of the Declaration of Helsinki, and informed consent for participation in the study was obtained.

The study adopted a modified Dreyfus scale14 with the following stages of competence: novice, beginner, advanced beginner and competent, excluding the proficient and expert stages. This modification was made to align with our objective of assessing ophthalmology and optometry trainees at the basic and entry levels. This study involved two phases: OCT rubric development and validation (Figure 1). The rubric was initially drafted by the first author and subsequently refined in collaboration with co-authors before being presented to internal and external validators.

Figure 1. Development and validation process.

In the validation phase, the authors engaged internal and external experts in ophthalmology and optometry with a specialization in retinal diagnostics. These validators had professional experience spanning 5 to over 10 years in clinical practice, academic teaching and student training. Invitations were extended via email, offering an overview of the study’s objectives and encouraging voluntary participation. The respondents willing to participate were emailed a modified rubric assessment with face and content validation questions (Figure 1). Twenty-one validators, comprising 10 internal (four ophthalmologists and six clinical optometrists) and 11 external validators from India, Singapore, the United Kingdom, Australia, Saudi Arabia and the United States of America, who practice ophthalmology (n=2) and optometry (n=9) were included in the study.

The OCT rubric comprised five domains (Table 1), clinical etiquette, pre-procedure, procedure, accuracy of procedure and interpretation of results, with four grading categories of novice, beginner, advanced beginner and competent. Uniform weightage was assigned for the tasks or items within the domains. However, the total weightage of each domain varied, factoring in the relative significance of technical proficiencies encapsulated within each individual domain.
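The weighting scheme described above can be sketched in a few lines of Python. This is only an illustrative sketch, not the authors' scoring procedure: the domain totals (15 and 10 points) are taken from the figures reported later in the Results and are read here as per-domain maxima, while the item counts per domain and the fraction of points awarded at each competence level are hypothetical assumptions introduced for the example.

```python
from dataclasses import dataclass

# ASSUMPTION: fraction of an item's points awarded at each competence level.
# The four level names come from the study; the fractions are hypothetical.
LEVEL_FRACTION = {
    "novice": 0.25,
    "beginner": 0.50,
    "advanced beginner": 0.75,
    "competent": 1.00,
}


@dataclass(frozen=True)
class Domain:
    name: str
    max_points: float  # total weight of the domain (varies between domains)
    n_items: int       # ASSUMPTION: hypothetical item count, not from Table 1

    @property
    def points_per_item(self) -> float:
        # Uniform weightage for the items within a domain.
        return self.max_points / self.n_items


RUBRIC = (
    Domain("clinical etiquette", 10, 4),
    Domain("pre-procedure", 10, 4),
    Domain("procedure", 15, 5),
    Domain("accuracy of procedure", 15, 5),
    Domain("interpretation of results", 10, 4),
)


def score_domain(domain: Domain, item_levels: list[str]) -> float:
    """Sum the points earned on each item of one domain."""
    if len(item_levels) != domain.n_items:
        raise ValueError(f"{domain.name}: expected {domain.n_items} ratings")
    return sum(domain.points_per_item * LEVEL_FRACTION[lvl] for lvl in item_levels)


def score_trainee(ratings: dict[str, list[str]]) -> float:
    """Total score across all five domains."""
    return sum(score_domain(d, ratings[d.name]) for d in RUBRIC)


# Example: a trainee rated "competent" on every item earns the full total.
all_competent = {d.name: ["competent"] * d.n_items for d in RUBRIC}
all_novice = {d.name: ["novice"] * d.n_items for d in RUBRIC}
max_total = score_trainee(all_competent)
min_total = score_trainee(all_novice)
```

Under these assumptions, each item in a domain carries the same number of points, and a domain's influence on the total depends only on its assigned weight.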

Table 1.

The study employed a set of eleven questions to thoroughly assess the dimensions of the rubric, including content, construct and scoring criteria as outlined in the literature15 (Figure 1). This assortment of questions was designed to facilitate face (n=1) and content validations (n=10), encompassing a comprehensive evaluation of the rubric’s facets. The sequential process of face and content validations is shown in Figure 1.

Results

The validation process involved the participation of both internal (n=10, 47.6%) and external (n=11, 52.4%) validators. Among them, 23.8% (n=5) were ophthalmologists and 76.2% (n=16) were optometrists.

Face Validation

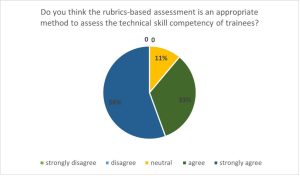

In face validation, 89% of the participants either strongly agreed or agreed that rubric-based assessment is an appropriate method to evaluate a trainee’s skill competency (Figure 2).

Figure 2.

Content Validation

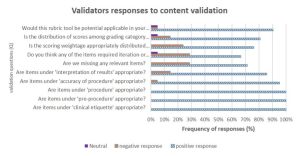

Type of responses from validators: The feedback provided by the internal and external validators was systematically collected and examined by the authors. For each validation question, a validator either agreed or did not agree, and either provided or did not provide a comment. Therefore, the authors grouped the responses into three categories (positive, negative and neutral), as shown in Figure 3. Most validators responded positively to the validation questions (mean = 18.2; 86%), of whom 13% provided comments. Approximately 12% (mean = 2.5) of validators responded negatively, of whom 44% provided comments.
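The relation between the reported mean response counts and percentages can be illustrated with a short calculation. The sketch below assumes that each mean is the average number of validators (out of the 21 on the panel) giving that type of response per content validation question; this reading is an assumption, and because the reported means are rounded, the results match the published percentages only approximately.

```python
TOTAL_VALIDATORS = 21  # 10 internal + 11 external validators, as reported


def share_of_panel(mean_responses: float, panel_size: int = TOTAL_VALIDATORS) -> float:
    """Express a mean response count as a percentage of the validator panel."""
    if panel_size <= 0:
        raise ValueError("panel_size must be positive")
    return 100.0 * mean_responses / panel_size


positive = share_of_panel(18.2)  # mean count of positive responses
negative = share_of_panel(2.5)   # mean count of negative responses
neutral = 100.0 - positive - negative  # remainder, assumed to be neutral

print(f"positive: {positive:.1f}%, negative: {negative:.1f}%, neutral: {neutral:.1f}%")
```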

Figure 3. Validators’ responses to content validation questions.

Validator comments for domain-specific content: The authors discussed and deliberated on the comments proposing modifications. Only the comments that garnered unanimous agreement among the authors were integrated into the rubric.

Clinical Etiquette

The validators found it difficult to differentiate between the third and fourth points/tasks (P3 and P4) in the clinical etiquette domain of the rubric. They suggested using simple language and stressed the importance of obtaining patient consent before conducting the OCT procedure. These comments were accepted by the authors, and the rubric was modified accordingly. For example, pre-iteration P3: communicates to patients professionally; P4: responds to the patient’s queries appropriately; post-iteration P3: maintains professionalism with patient verbally and non-verbally; P4: responds to the patient’s queries relating to the test appropriately and ensures the patient’s willingness to undergo the test (Table 1, domain: Clinical etiquette, steps 3 and 4).

Pre-Procedure

The validators recommended using suitable language when communicating with the patient and describing the test procedure in simple terms to enhance comprehension. The authors concurred that simplified language could improve patient compliance during the examination. Moreover, the validators suggested including items on informing patients about the potential requirement for pupil dilation and on aligning the patient’s canthus with the canthal mark on the instrument.

Procedure

In response to the validators’ feedback, we have integrated appropriate scan selection protocols into the rubric (Table 1, domain: Procedure, step 2). Another significant observation from validators emphasized the importance of considering communication dynamics between students and patients during the procedure explanation. Accordingly, these aspects have been integrated into the rubric, delineated within the framework of clinical etiquette and pre-procedure (Table 1, steps 3 and 4).

Accuracy of Procedure

In response to the feedback requesting a concise delineation of the distinct artefact types along with examples, we have revised the rubric to include patient-related, operator-related and device-specific artefacts (Table 1, domain: Accuracy of procedure, steps 2, 3 and 4).

Interpretation

In routine diagnostic training practice, trainees are educated in diverse analysis protocols, such as single line scan, radial scan, macular volumetric analysis and OCT angiography analysis, where appropriate, based on the specific ocular disease conditions and diagnostic test requirements. The trainee’s ability to select an appropriate scanning protocol was suggested as an evaluation item for the rubric. The feedback concerning this aspect was positive, and the new element (Table 1, domain: Interpretation, step 1) was added in response.

Validators’ Comments on the Rubric Construct and Scoring Criteria

A validator raised a query concerning the potential omissions of pertinent elements and recommended the inclusion of the limitations associated with normative databases and their applicability in patient outcomes.

A validator also commented on whether any aspect necessitated refinement. They proposed including a time limit to assess the precision of the procedure. The authors accepted this recommendation, integrating it as a novel item within the rubric.

Feedback from validators concerning the allocation of scores and weighting across different domains was diverse. Most validators (n=16; 76%) found the current distribution of weighting appropriate, in which the procedure and accuracy of the procedure domains are given higher scores (15 points) than the other domains (10 points), namely clinical etiquette, pre-procedure and interpretation. One validator recommended assigning greater weightage to interpretation than to the accuracy of the procedure domain, whereas others suggested an equal distribution across the domains. The current allocation aligns with the rubric’s primary aim of assessing optometry/ophthalmology trainees, prioritizing procedural accuracy as critical to their development. Interpretation is weighted slightly lower to emphasize mastering foundational technical skills before advancing to higher-order clinical reasoning. The weighting thus reflects the focus on competencies essential at the trainee level.

Approximately 82% of validators expressed their belief in the potential applicability and adoption of the presented rubric tool in their respective clinical settings or regions, while the others remained neutral in their response. This overall positive sentiment suggests a promising outlook for the rubric’s practical implementation.

Discussion

A wide range of assessment methods16 are available in the field of medical education, including essay questions, patient management problems, modified essay questions, checklists, objective structured clinical examinations, student projects, constructed response questions, multiple choice questions, critical reading papers, rating scales, extended matching items, tutor reports, portfolios, short and long case assessments, logbooks, trainer’s reports, audits, simulated patient surgeries, video assessments, simulators, self-assessment, peer assessment and standardized patients. Each of these approaches brings its own strengths and limitations, contributing to the diversity of assessment strategies. In this landscape of assessment methodologies, rubric-based evaluation emerges as a versatile approach that streamlines assessment complexities and offers a framework for clarity and consistency. It stands out as a viable solution for the multifaceted challenges associated with evaluation.9 A rubric is a structured tool that defines the assessment criteria and educational expectations and provides guidance for learning and evaluation.17 It is a dynamic resource that guides the assessment process and facilitates a comprehensive feedback mechanism. These guidelines are instrumental in orienting trainees to accomplish tasks in accordance with protocols, enabling them to achieve higher levels on the competency scale. These predefined criteria improve the performance levels of trainees, making this assessment method beneficial for both learners and instructors.

Validity is a pivotal factor in enhancing the calibre of a rubric, functioning as a gauge to ascertain if it accurately measures the intended attributes. Therefore, this study undertook careful measures to formulate questions that draw insights from pertinent literature15,18 on rubric development and validation. This meticulous approach aimed to refine the characteristics of the rubric, aligning it more closely with its fundamental assessment objectives.

This study assessed the positive (86%) and negative (12%) suggestions provided by the validators. The authors discussed the suggested modifications and incorporated the changes that they agreed on unanimously. Key recommendations included in the rubric are the use of simple language, the importance of obtaining consent before the OCT procedure, patient alignment with the instrument and clear instructions about the purpose of the test and its steps. Moreover, the validators emphasized that trainees must possess knowledge about potential image artefacts to enhance their comprehension and interpretation of OCT scans.

In response to the validators’ comments, the rubric was revised to encompass tasks related to identifying different sources of artefacts,7,19 namely patient-related, device-related and examiner-related. Moreover, the investigators agreed that it is important to assess a student’s ability to select an appropriate scanning protocol. Commonly used scanning protocols in OCT retinal imaging, such as the three-dimensional cube, radial and raster scans, were included as examples in the rubric.7 The rubric also incorporated a time factor; time measurement is extensively used in medical education to assess competence in training for medical procedures.20

The interpretation of findings necessitates advanced diagnostic skills, requiring the application of prior knowledge and understanding of the disease pathology to differentiate abnormal findings from normal. The authors acknowledge that proficiencies lie within both the procedure and accuracy of the procedure domains, which are equally vital to ensure precise result interpretation. However, in the training phase, students focus primarily on mastering the procedure, as inaccuracies in the execution of the procedure can adversely impact result interpretation. Therefore, the procedure and accuracy of procedure domains were assigned higher weightage compared to interpretation. While the rubric allocated different weightage to the five domains based on their importance and expected learning outcomes, equal scores were assigned to items or steps within the individual domains (e.g., steps under the clinical etiquette domain). This decision was based on the interrelation of these aspects, highlighting their collective contribution to a holistic understanding.

Limitations of the study

The inclusion of international validators for face and content validations is a notable strength, as it ensures diverse perspectives from experts with combined experience in clinical practice and training. However, the relatively small sample size of participants, despite their representation from diverse countries, is a limitation. This limitation arises from the study’s selective inclusion criteria, focussing exclusively on experts in retinal imaging who are actively involved in teaching and training students. Future studies should address this by ensuring broader representation to enhance the generalizability and further validation of the findings.

Conclusion

While assessment rubrics have previously been introduced in ophthalmology training,21-24 our study has developed a rubric specifically tailored for retinal OCT. We recommend that practitioners consider using this tool as a foundational reference, adapting it to their unique practice settings. The retinal OCT rubric that has been developed and validated in this study provides a promising avenue for assessing the proficiency of trainees in performing and interpreting retinal OCT scans. The implementation of this analytic rubric has the potential to enhance the quality of training and positively impact the overall standards of patient care.

Acknowledgements

The authors wish to thank all the study participants who accepted the invitation and participated in the validation of the study rubric. The authors wish to acknowledge Abhinav Sekar, the scientific editor, and members of the education team at LV Prasad Eye Institute for their feedback and support. We sincerely acknowledge the strong collaboration between LV Prasad Eye Institute, Hyderabad, India, and Singapore Polytechnic, Singapore. We also extend our gratitude to the Standard Chartered – LVPEI Academy for Eye Care Education for their support.

References

- World Health Organization World Report on Vision [Internet]. England: International Agency for the Prevention of Blindness; c2019. Available from: https://www.iapb.org/learn/resources/the-world-report-on-vision/

- Saleh GA, Batouty NM, Haggag S, et al. The Role of Medical Image Modalities and AI in the Early Detection, Diagnosis and Grading of Retinal Diseases: A Survey. Bioengineering (Basel) 2022 Aug;9(8). doi: 10.3390/bioengineering9080366

- Kwan CC, Fawzi AA. Imaging and Biomarkers in Diabetic Macular Edema and Diabetic Retinopathy. Curr Diab Rep. 2019 Aug 31;19(10):95. doi: 10.1007/s11892-019-1226-2

- Prakasam RK, Matuszewska-Iwanicka A, Fischer DC, et al. Thickness of Intraretinal Layers in Patients with Type 2 Diabetes Mellitus Depending on a Concomitant Diabetic Neuropathy: Results of a Cross-Sectional Study Using Deviation Maps for OCT Data Analysis. Biomedicines. 2020 Jul 2;8(7). doi: 10.3390/biomedicines8070190

- Murthy RK, Haji S, Sambhav K, Grover S, Chalam KV. Clinical applications of spectral domain optical coherence tomography in retinal diseases. Biomed J. 2016 Apr;39(2):107-20. doi: 10.1016/j.bj.2016.04.003

- Aumann S, Donner S, Fischer J, Muller F. Optical Coherence Tomography (OCT): Principle and Technical Realization. In: Bille JF, editor. High Resolution Imaging in Microscopy and Ophthalmology: New Frontiers in Biomedical Optics. Cham: Springer International Publishing; 2019. p. 59-85. doi: 10.1007/978-3-030-16638-0_3

- Bhende M, Shetty S, Parthasarathy MK, et al. Optical coherence tomography: A guide to interpretation of common macular diseases. Indian J Ophthalmol. 2018;66(1):20-35. doi: 10.4103/ijo.IJO_902_17

- Chandra S, Rasheed R, Sen P, Menon D, Sivaprasad S. Inter-rater reliability for diagnosis of geographic atrophy using spectral domain OCT in age-related macular degeneration. Eye (Lond) 2022 Feb;36(2):392-97. doi: 10.1038/s41433-021-01490-5

- O’Donnell JA, Oakley M, Haney S, O’Neill PN, Taylor D. Rubrics 101: a primer for rubric development in dental education. J Dent Educ. 2011 Sep;75(9):1163-75.

- Ferrara V, De Santis S, Melchiori FM. Art for improving skills in medical education: the validation of a scale for measuring the Visual Thinking Strategies method. Clin Ter. 2020 May-Jun;171(3):e252-e59. doi: 10.7417/ct.2020.2223

- Kondo T, Nishigori H, van der Vleuten C. Locally adapting generic rubrics for the implementation of outcome-based medical education: a mixed-methods approach. BMC Med Educ. 2022 Apr;22(1):262. doi: 10.1186/s12909-022-03352-4

- Nisbet G, Jorm C, Roberts C, Gordon CJ, Chen TF. Content validation of an interprofessional learning video peer assessment tool. BMC Med Educ. 2017 Dec;17(1):258. doi: 10.1186/s12909-017-1099-5

- Yune SJ, Lee SY, Im SJ, Kam BS, Baek SY. Holistic rubric vs. analytic rubric for measuring clinical performance levels in medical students. BMC Med Educ. 2018 Jun 5;18(1):124. doi: 10.1186/s12909-018-1228-9

- Dreyfus SE, Dreyfus H. A Five-Stage Model of the Mental Activities Involved in Directed Skill Acquisition. Distribution. 1980 Feb.

- Moskal B, Leydens J. Scoring Rubric Development: Validity and Reliability. Practical Assessment Research and Evaluation. 2000 Jan;7.

- Syed AT. Assessment methods in medical education. Int J Health Sci (Qassim). 2008 Jul;2(2):3-7.

- Blommel ML, Abate MA. A rubric to assess critical literature evaluation skills. Am J Pharm Educ. 2007 Aug 15;71(4):63. doi: 10.5688/aj710463

- Reddy YM, Andrade H. A review of rubric use in higher education. Assessment & Evaluation in Higher Education. 2010 Jul;35(4):435-48. doi: 10.1080/02602930902862859

- Chhablani J, Krishnan T, Sethi V, Kozak I. Artifacts in optical coherence tomography. Saudi J Ophthalmol. 2014 Apr;28(2):81-87. doi: 10.1016/j.sjopt.2014.02.010

- Pusic MV, Brydges R, Kessler D, Szyld D, Nachbar M, Kalet A. What’s your best time? Chronometry in the learning of medical procedures. Med Educ. 2014 May;48(5):479-88. doi: 10.1111/medu.12395

- Green CM, Salim S, Edward DP, Mudumbai RC, Golnik K. The Ophthalmology Surgical Competency Assessment Rubric for Trabeculectomy. J Glaucoma 2017 Sep;26(9):805-09. doi: 10.1097/ijg.0000000000000723

- Juniat V, Golnik KC, Bernardini FP, et al. The Ophthalmology Surgical Competency Assessment Rubric (OSCAR) for anterior approach ptosis surgery. Orbit. 2018 Dec;37(6):401-04. doi: 10.1080/01676830.2018.1437754

- Justin GA, Soleimani M, Zafar S, et al. The Ophthalmology Surgical Competency Assessment Rubric (OSCAR) for Open Globe Surgical Management. Clin Ophthalmol. 2022 Jun 21;16:2041-46. doi: 10.2147/opth.S354853

- Law JC, Golnik KC, Cherney EF, Arevalo JF, Li Xiaoxin, Ramsamy K. The Ophthalmology Surgical Competency Assessment Rubric for Panretinal Photocoagulation. Ophthalmol Retina. 2018 Feb;2(2):162-67. doi: 10.1016/j.oret.2017.06.002